Welcome to Part 3 of the results of a massive 6.5 Creedmoor ammo field test, where I tested multiple boxes of every kind of 6.5 Creedmoor ammo that is marketed as “match” or “target” grade. That included 19 different brands and types of 6.5 Creedmoor ammo! This article is going to cover all the muzzle velocity data that I collected over almost 1,000 rounds fired.

Consistent muzzle velocity is key for long-range shooting. Otherwise, bullets that leave the muzzle faster than normal could miss high, and bullets that leave the muzzle slower could miss low. While the goal is for each shot to leave the muzzle at precisely the same velocity, no ammo is perfect. So it is very helpful for us as long-range shooters to understand the variation we can expect from our ammo shot-to-shot.

Many long-range shooters believe the most useful measuring stick for ammo quality is how consistent the muzzle velocity is. Often that’s how I can tell how experienced someone is in shooting long-range: Are they more concerned with getting maximum velocity or finding the most consistent velocity? I’m not saying faster bullets aren’t advantageous, but for precision long-range work that is secondary to consistent velocity.

How much does it take to miss?

Let’s start by putting muzzle velocity variation in context for long-range shooting. A change in velocity of just 30 fps would change your bullet drop by about 8.5 inches at 1,000 yards. A 50 fps variation in MV would put you off-center by 14 inches! That is based on the average ballistics for all of the 6.5 Creedmoor match ammo that I tested (avg. MV, avg. bullet weight, avg. BC), and assumes everything else was dead on.
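For a quick sanity check on those numbers, here is a minimal sketch that treats the vertical shift at 1,000 yards as proportional to the change in muzzle velocity. The sensitivity constant is derived from the 30 fps / 8.5 inch figure above, and the function name is my own for illustration; this is a rough linear approximation, not a ballistic solver.

```python
# Rough linear sketch, assuming the vertical shift at 1,000 yards scales
# proportionally with a small change in muzzle velocity. The sensitivity is
# taken from the article's figure of about 8.5 inches per 30 fps.
INCHES_PER_FPS_AT_1000_YD = 8.5 / 30.0


def vertical_shift_inches(delta_mv_fps: float) -> float:
    """Approximate change in impact height at 1,000 yards for a given MV change."""
    return delta_mv_fps * INCHES_PER_FPS_AT_1000_YD


if __name__ == "__main__":
    for delta in (30, 50, 60):
        print(f"{delta} fps -> about {vertical_shift_inches(delta):.1f} inches")
```

Under that assumption, a 50 fps change works out to a little over 14 inches, which lines up with the figure quoted above.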

The diagram below shows a shot simulation and hit probability on a 20” target at 1,000 yards for a few different SD’s to give you context for how that might play out in the real world:

A 20″ target is a pretty generous size, but you can use the visual above to estimate what would happen with a smaller target. We should also remember that the simulated shots and hit probability above assume that our firing solution is absolutely perfect and we also broke the shot exactly where we should have.

To learn more about how much consistent muzzle velocity matters in terms of hit probability at long-range, read How Much Does SD Matter?

Quantifying Muzzle Velocity Variation: ES & SD

When shooters talk about muzzle velocity variation they refer to either Extreme Spread (ES) or Standard Deviation (SD).

- Extreme Spread (ES): The difference between the slowest and fastest velocities recorded.

- Standard Deviation (SD): A measure of how spread out a set of numbers is. A low SD indicates all our velocities are closer to the average, while a high SD indicates the velocities are spread out over a wider range.
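If it helps to see both definitions applied to numbers, here is a small sketch using Python's standard library. The 10-shot string is made up purely for illustration, and the helper names are my own.

```python
import statistics

# Hypothetical 10-shot string in fps, invented for illustration only.
velocities = [2710, 2698, 2722, 2705, 2715, 2701, 2719, 2708, 2695, 2712]


def extreme_spread(shots):
    """ES: fastest shot minus slowest shot."""
    return max(shots) - min(shots)


def sample_sd(shots):
    """SD: sample standard deviation (n - 1 in the denominator)."""
    return statistics.stdev(shots)


if __name__ == "__main__":
    print(f"ES = {extreme_spread(velocities)} fps")
    print(f"SD = {sample_sd(velocities):.1f} fps")
```

Notice that ES only looks at two of the ten shots, while SD uses all of them.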

If you’re math-averse, please stick with me! This is important, and understanding the basics can seriously help as a shooter. This topic in particular is exactly why I dedicated time to write the “Statistics for Shooters” 3-part series. One of those articles dives into this specific topic and has lots of visuals and explains it in a way that you don’t have to be a math-nerd to understand. If you aren’t familiar with ES or SD, I’d highly recommend you go read “Muzzle Velocity Stats – Statistics for Shooters” so you’ll be able to get the full value from this article.

Here are some key points from that article related to using ES or SD to quantify muzzle velocity:

- SD is a more reliable and effective stat when it comes to quantifying muzzle velocity variations. ES is easier to measure but is a weaker statistical indicator in general because it is entirely based on the two most extreme events.

- It’s probably a bad idea to be completely dismissive of either ES or SD. Both provide some form of insight. An over-reliance on any descriptive statistic can lead to misleading conclusions.

- ES continues to grow as you fire more shots, but the average MV and SD will both begin to converge on the true value as your sample size gets larger.

- While it’s easy to get close to the average muzzle velocity with 10 shots or less, it’s exceedingly more difficult to measure variation and SD with precision. There is a tendency for SD to be understated in small samples. To have much confidence that our SD is accurate, we need a larger sample size than many would think – likely 20-30 shots or more. The more the better!

- It is very difficult to determine minor differences in velocity variation between two loads without a very large sample size (e.g. 40+ rounds). Often we make decisions based on truly insufficient data because the measured performance difference between two loads is simply a result of the natural variation we can expect in small sample sizes.
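The point about SD being understated in small samples can be put into numbers. A minimal sketch, assuming velocities are normally distributed: the expected sample SD is the true SD multiplied by a correction factor (often called c4) that depends only on the number of shots. The function name is mine.

```python
import math


def expected_sd_ratio(n: int) -> float:
    """Expected sample SD divided by true SD for n normally distributed shots.

    This is the standard c4 correction factor; it is always below 1,
    which is why small strings tend to understate SD on average.
    """
    return math.sqrt(2.0 / (n - 1)) * math.gamma(n / 2) / math.gamma((n - 1) / 2)


if __name__ == "__main__":
    for n in (3, 5, 10, 20, 40):
        print(f"{n:>2} shots: expected SD is {expected_sd_ratio(n):.1%} of the true SD")
```

The average bias is only part of the story. The bigger problem with small strings is how much the measured SD bounces around from one string to the next, which this factor does not capture.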

With all that in mind, I plan to provide both ES and SD for all the data I collected – but I’ll primarily use SD when making comparisons because it is the stronger statistical measure.

What is a good SD?

If you’re newer to the concept of SD, you might be wondering, “What is a ‘good’ SD when it comes to muzzle velocity?”

In my experience, most standard factory ammo that is NOT labeled as “match” or “target” has an SD in the 15-22 fps range. It’s relatively easy for a reloader to produce ammo with an SD of 15 fps, but we typically have to be meticulous and use good equipment and components to wrestle that down into single digits (i.e. under 10 fps). In fact, I’d bet good money that while most reloaders believe they’re producing quality ammo, if they shot a couple of 10-shot strings over an accurate chronograph (e.g. LabRadar, MagnetoSpeed) they’d see an average SD in the 10-15 fps range. I’m not saying all reloaders, but I am saying most. Often reloaders either don’t test their ammo over a chronograph or when they do they may only fire 3-5 rounds over it. Since there is a tendency for SD to be understated in small sample sizes, if they fired 15 more shots they’d likely see their SD fall in that 10-15 fps range – and often on the higher end of that.

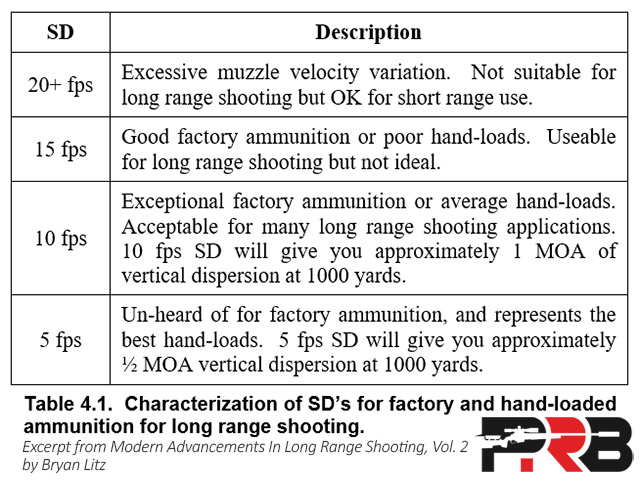

The table below is from Modern Advancements in Long Range Shooting Volume 2 and it provides what Bryan Litz describes as a “summary of what kind of SD’s are required to achieve certain long-range shooting goals in general terms”:

In general, most long-range shooters have a goal to reload ammo with SD’s “in the single digits” (i.e. under 10 fps). However, I’d say in most PRS or NRL-type matches, getting below 10 really isn’t necessary for typical target sizes and distances out to 1,000 yards. I’ve used match-grade factory ammo with SD’s in the 12 fps range to place in the top 3 in local PRS matches multiple times. While a match might have one stage with 1 MOA targets out to 1,000 yards – that is rare and doesn’t represent the typical target sizes. It’s far more common to have 1.5-2.0 MOA targets in precision rifle matches. Now, if you’re competing at the highest levels where one miss could be the difference between landing in the top 5 or 15th – everything starts to matter more! Those guys want single digits, but us “normal guys” honestly have weaker links in our chain than our SD being a smidge over 10. I’d say an SD below 14 fps is an appropriate goal for the majority of long-range shooters. That would likely keep your ammo from being one of your biggest limiting factors.

Note: Competitive shooters engaging targets beyond 2,000 yards in Extreme Long Range (ELR), where first-round hits are critical, want to be closer to 5 fps. The further the distance, the more critical consistent muzzle velocity becomes, so the lower the better!

For more context, read How Much Does SD Matter?

How I Gathered Muzzle Velocity Data

Sample Size & Where The Ammo Came From

The stats article explains this in more detail, but just for context if you only fired 5 shots from any of these boxes of ammo and measured one to have an SD of 8 fps and another to have an SD of 15, we’d think we found something meaningful, right? Actually, there is less than a 75% chance that the difference is real, because of the natural variation you can expect in a sample that small. That means there is more than a 1 out of 4 chance that both could have come from the exact same case of ammo and one measured 8 fps and the other 15. It’s simply not a large enough sample size to have any certainty that the results were accurate and repeatable.
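You can check that claim yourself with a quick simulation. The sketch below is my own illustration, assuming normally distributed velocities: it draws two 5-shot strings from the exact same population over and over, and counts how often their SDs differ by at least the 15-to-8 ratio. The true SD of 12 fps, the trial count, and the seed are arbitrary choices (the ratio test does not depend on the true SD).

```python
import math
import random


def _sample_sd(values):
    """Sample standard deviation (n - 1 in the denominator)."""
    mean = sum(values) / len(values)
    return math.sqrt(sum((v - mean) ** 2 for v in values) / (len(values) - 1))


def chance_of_sd_gap(n_shots=5, sd_ratio=15 / 8, true_sd=12.0, trials=20000, seed=1):
    """Estimate how often two strings from the SAME ammo differ in SD by sd_ratio or more."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        a = _sample_sd([rng.gauss(0.0, true_sd) for _ in range(n_shots)])
        b = _sample_sd([rng.gauss(0.0, true_sd) for _ in range(n_shots)])
        if max(a, b) / min(a, b) >= sd_ratio:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    print(f"5-shot strings:  {chance_of_sd_gap(n_shots=5):.1%}")
    print(f"40-shot strings: {chance_of_sd_gap(n_shots=40, trials=2000):.1%}")
```

With 5-shot strings the result should land at roughly one in four, and with 40-shot strings a gap that large from identical ammo becomes very rare.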

In fact, if you are trying to differentiate minor differences like 2-3 fps in SD between loads, you need a sample size of at least 40 rounds! So, that’s why I fired two boxes of each type of ammo – a sample size of 40. At first, I was just thinking of doing one box of each ammo, but if you know stats, you know that could potentially be misleading. I believed that so much that I personally dropped almost $1,000 more to buy a 2nd box of each type of ammo. Now we can have real confidence in the results!

While I could have reached out to companies like Hornady, Berger, PRIME, Federal, and others, and they would have happily sent me discounted or even free ammo for this test – I decided to buy it all for full retail price from popular online distributors. I didn’t tell any of the manufacturers that I was doing this test, so none of the ammo could have been cherry-picked or loaded “special” for it. It was all just random ammo off a shelf somewhere. In fact, I ordered one box of each in December 2019 from popular online distributors, and then I waited 6 months and ordered another box of each from a completely different list of distributors. PRIME and Copper Creek only sell direct to customers, so I had to order those from the manufacturer directly. Waiting 6 months between orders should ensure the ammo tested is representative of what you can expect.

The Data-Gathering Process

I realize this research could potentially have a significant impact on sales for some of these ammo manufacturers, so I tried to go above and beyond to ensure the data I collected was trustworthy. I’m certainly not “out to get” any manufacturers or to promote any of them either. None of them sponsor the website or have ever given me anything for free or even discounted. There aren’t hidden relationships or agendas here. I’m a 100% independent shooter who is simply in search of the truth to help my readers. So, as always, I tried to identify any significant factors that could skew the results, and then created testing methods that tried to eliminate those or at least minimize them to an acceptable level.

A big part of that for these muzzle velocity results was using 3 LabRadar Doppler Radars to record the shots. If you aren’t familiar with a LabRadar, you should come out from the rock you’ve been living under! 😉 It’s a device that tracks a bullet downrange using Doppler Radar and then analyzes that data to calculate an extremely accurate muzzle velocity. I looked at the underlying log data on the LabRadar for this test, and it looked like each device would typically record 55-85 data points for every shot. So with 3 of them running, that means I recorded around 200 velocity measurements per shot – or 8,000 data points for each type of ammo!

The chart below is a real example of the underlying log data for a single shot during this research. There are 84 black dots, which each represent a data point where the velocity was recorded. You can see the distances each was recorded along the horizontal axis, which included measurements out to 107 yards. I also added a trendline for that data, and the blue dot is what the device calculated the velocity at the muzzle must have been to result in those downrange measurements.
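To make the idea of a trendline through downrange readings concrete, here is a minimal sketch that fits a straight line to (distance, velocity) points and reads off the value at zero distance. This is only an illustration of the concept; I don't know the actual algorithm Infinition uses, and the readings below are hypothetical and noise-free so the result is easy to check.

```python
def fit_muzzle_velocity(distances_yd, velocities_fps):
    """Least-squares straight line through the points.

    Returns (intercept, slope): the intercept is the estimated velocity at
    distance 0 (the muzzle), and the slope is fps lost per yard.
    """
    n = len(distances_yd)
    mean_x = sum(distances_yd) / n
    mean_y = sum(velocities_fps) / n
    sxx = sum((x - mean_x) ** 2 for x in distances_yd)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(distances_yd, velocities_fps))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


# Hypothetical readings: a bullet losing 0.8 fps per yard from 2,710 fps.
distances = [10, 30, 50, 70, 90, 107]
velocities = [2702.0, 2686.0, 2670.0, 2654.0, 2638.0, 2624.4]

if __name__ == "__main__":
    mv, slope = fit_muzzle_velocity(distances, velocities)
    print(f"Estimated muzzle velocity: {mv:.1f} fps (slope {slope:.2f} fps/yd)")
```

The more downrange points that feed the fit, the less any single noisy reading can move the estimate at the muzzle.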

The company that makes the LabRadar is named Infinition, and they’ve been manufacturing high-end instrumentation radars for more than a decade. Infinition’s high-end radars are used daily by professionals at serious research centers, ballistic labs, and proving grounds around the world. So they are experts among experts in this field, and this consumer-grade product includes a lot of the technology and lessons learned from their high-end radars, and brings that technology into the hands of the shooters and hunters. The LabRadar is a big leap ahead of most traditional light-based chronographs in terms of accuracy and reliability. Infinition says the LabRadar has an accuracy of 0.1%.

I went with 3 LabRadar’s for a couple of reasons. My first thought was to mitigate the risk of a bad measurement or device malfunction potentially skewing the results. While I can’t remember experiencing a moment when the device gave a “bad reading,” I felt like it was a good idea to alleviate that concern. I did that by taking the median of the 3 measurements, which means if one of the 3 devices had a reading that was lower or higher than the other 2 devices it had no impact on the results. Honestly, most of the time the measurements were within 1-2 fps on all 3 devices, so this was probably overkill – but at least we don’t have to worry about the recording device skewing the results.

Also, while it’s rare, a single LabRadar can occasionally miss a shot. Having 3 devices ensured at least 2 of them caught every shot – and I can confirm that I got all 760 shots for record measured by at least two LabRadar’s. I was curious how often 1 of the 3 devices didn’t catch a shot, so I did the analysis and all 3 devices captured the velocity 95% of the time.
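Here is a small sketch of that median-of-three idea. The function name and the use of None for a missed shot are my own conventions, and how to combine a shot that only two devices caught is an assumption on my part: the median of two values is simply their average.

```python
import statistics


def shot_velocity(readings):
    """Combine up to three radar readings for one shot.

    `readings` holds one value per device, with None where a device
    missed the shot. With three readings the median ignores a single
    outlier; with two readings the median is their average (assumption).
    """
    valid = [r for r in readings if r is not None]
    if len(valid) < 2:
        raise ValueError("need at least two readings for a shot to count")
    return statistics.median(valid)


if __name__ == "__main__":
    print(shot_velocity([2710, 2711, 2745]))  # one device reads high
    print(shot_velocity([2710, None, 2712]))  # one device missed the shot
```

In the first call the stray 2745 reading has no effect on the recorded velocity, which is the whole point of using the median rather than the average.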

I was careful in how I set up the devices. I reread through the manual to make sure I hadn’t misunderstood or forgotten something. You can explicitly set the transmission frequency of the radar so you can use multiple radars without them interfering with each other. The manufacturer recommends at least 2 channels of separation between devices, and I used 4+ channels of separation. I also ensured the devices were all similar distances from the muzzle and aligned them relative to the muzzle as the manufacturer suggests. I also configured the projectile offset on each device to correlate to the distance to the side of the radar, as recommended to optimize precision.

When it came to the rifle and firing process, I used two different rifles. I do personally own a couple of high-end 6.5 Creedmoor rifles, but I know most of my readers aren’t using an $8,000 custom rifle setup. Also, it’s possible that a particular kind of ammo might perform better out of one rifle than another, because of differences in the chamber, barrel, and other mechanical nuances. Since more people use factory rifles than custom rifles, I decided to buy a stock Ruger Precision Rifle (RPR) to use in this test. Again, I bet if I’d have reached out to Ruger they would have gladly loaned me a rifle for this test, but I decided to simply buy a brand new one from GunBroker.com, just like my readers would, to try to ensure it was representative and not a rifle that potentially had been cherry-picked off the line. So that was another out-of-pocket expense for this test, and I hope all of this shows how serious I am about objective testing. I am whole-heartedly in search of the real, unbiased truth to help fellow shooters!

The custom Surgeon rifle featured a 22-inch barrel, and I decided to shoot the test without the suppressor attached (i.e. bare muzzle). I didn’t want to risk it somehow affecting the results if it came loose or somehow the heat caused mirage issues that skewed the group sizes or maybe it was too quiet to trigger the LabRadar on all the shots. The Ruger Precision Rifle came with their stock 24-inch barrel, and I didn’t change a thing about the whole rifle. Both rifle barrels had a 1:8 twist rate.

Another thing I thought about that could potentially skew the results was the barrel condition. So I did these things to mitigate some potential issues related to that:

- First, I “broke in” the new barrel on the RPR by firing 150 rounds down it. In my experience, the velocity has always stabilized by that point, and it had with the Ruger Precision Rifle, as well. The custom 6.5 Creedmoor had just over 2,600 rounds on it when the testing started (yes, I document every round). It was outfitted with a custom Bartlein barrel with a StraightJacket and was chambered by Surgeon Rifles. If you aren’t familiar with the StraightJacket, you should read my massive barrel test research published in Modern Advancements in Long Range Shooting, Vol 2. (If you are reading about this test, I guarantee you will LOVE that book. I find myself referencing it more than any other book – and I’ve read virtually all of them.)

- I ran a cleaning regimen during all testing where I would start with a clean barrel and fire 4 shots that weren’t for record to foul the barrel and ensure all the cleaning solvent was out. Then I would fire no more than 30 shots for record before I cleaned the barrel and repeated the process. That would always be 10 rounds each from 3 different types of ammo. The cleaning process and method were consistent throughout testing. I randomized the order that I shot the ammo and changed it between the first and second boxes.

- The barrels were always allowed to cool to ambient temperature between each 10-shot string for record.

So I recorded a 10-shot string from each rifle and each box of ammo. The total sample size for each brand and type of ammo was 40 rounds, which was from two different lots of ammo purchased 6 months apart from different distributors. Then I was OCD and paranoid about every aspect that might skew the results, and I tried to control for those aspects in any way I could think of. Now let’s see the results!

The Results

I will be providing the detailed data, and not just the watered-down summary info or an A/B/C style rating like many magazines tend to do. That is primarily for transparency, but also because I know I’ve attracted a lot of detailed and critical readers – because that’s what I am! I know my subscribers include ballisticians, Olympic and world champion shooters, statisticians, and many professional researchers within the small arms community. You guys are smart people (honestly, it’s a bit intimidating knowing those people are reading), so my approach is always to present the facts and let you guys draw your own conclusions. This test is no different. Don’t worry – you’ll get exhaustive detail in the next post!

Having said that, I know some guys just want to see the head-to-head comparison, so before we dive into all the details for each type of ammo tested, we’ll cut to the chase and start by looking at the overall summary of performance when it comes to muzzle velocity.

As we already established, SD is a more reliable and effective stat than ES when it comes to quantifying muzzle velocity variations, so that is what I’m going to focus on. (But don’t worry, I’ll provide the measured ES’s too in the detailed data.) The chart below shows the average SD across the four 10-shot strings that I fired with each type of ammo. That is based on a total sample size of 40 rounds for each type of ammo. I also calculated the stats with a different approach where I normalized the velocity by rifle and lot of ammo, and then calculated the SD over all 40 shots in one sampling – and the results of both methods were virtually identical (average difference was 0.1 fps). I thought the average SD of the four 10-shot groups is a bit more straightforward and easy to understand, so I ranked them based on that method below.
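For the stats-minded, here is a rough sketch of those two approaches side by side. The data is simulated and purely hypothetical (four 10-shot strings with different average velocities to mimic two rifles and two lots), and the way I normalize, by subtracting each string's own mean and spending one degree of freedom per string, is an assumption about one reasonable way to do it rather than a record of the exact calculation used for the chart.

```python
import math
import random
import statistics


def average_string_sd(strings):
    """Method 1: average of the per-string sample SDs."""
    return statistics.mean([statistics.stdev(s) for s in strings])


def normalized_sd(strings):
    """Method 2: remove each string's own mean, then take one SD over all shots."""
    squared = 0.0
    for s in strings:
        mean = statistics.mean(s)
        squared += sum((v - mean) ** 2 for v in s)
    # Assumption: one degree of freedom is used up per string mean.
    dof = sum(len(s) for s in strings) - len(strings)
    return math.sqrt(squared / dof)


# Hypothetical data: 2 rifles x 2 lots, each string with its own average MV.
rng = random.Random(7)
string_means = [2700.0, 2715.0, 2740.0, 2752.0]
strings = [[rng.gauss(m, 12.0) for _ in range(10)] for m in string_means]

if __name__ == "__main__":
    print(f"Average of four 10-shot SDs: {average_string_sd(strings):.1f} fps")
    print(f"Normalized 40-shot SD:       {normalized_sd(strings):.1f} fps")
```

Without the normalizing step, the velocity offset between rifles and lots would inflate a single 40-shot SD, which is why the two rifles can't simply be lumped together.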

The Best 5

The Federal Premium Gold Medal 140 MatchKing ammo had the lowest SD at just 8.6 fps! Considering that was a sample of 40 rounds in two different lots of ammo from two different rifles – that is an absolutely stunning performance. Federal, I tip my cap to you for that performance over multiple boxes of ammo. Wow!

Sig was not far behind in 2nd with their 6.5 Creedmoor Elite Performance Match 140 OTM ammo also posting an SD in the single digits – at just 9.1 fps! Considering that is mass-produced factory ammo, that is exceptional. I would suspect both are more consistent than the ammo that the majority of reloaders produce, even after investing a ton more time per round. I’m not saying super-OCD handloaders with good equipment can’t top it – but that is absolutely match-worthy ammo!

Behind those top 2 were the Berger triplets taking 3rd, 4th, and 5th with SD’s from 10.6 to 11.4 fps. I tested 3 different types of Berger Match ammo, each loaded with a different bullet: the 120 Scenar-L, 130 OTM Tactical (Hybrid), and the 140 Hybrid. Those 3 types of Berger match ammo landed neck-and-neck with just 0.8 fps of variance in their overall SD’s after 40 rounds – even over two lots of ammo. How is that for consistency?!

Remember what expert ballistician and long-range expert Bryan Litz said about ammo with an SD around 10 fps: “Exceptional factory ammunition … Acceptable for many long range shooting applications. 10 fps SD will give you approximately 1 MOA of vertical dispersion at 1000 yards.” I agree with Bryan. The factory ammo in this top 5 is exceptional!

The Middle 10

In the middle of the pack is a long list of other types of ammo with SD’s between 12.3 and 15.2 fps. In fact, 10 of the 19 types of ammo landed in that band that was just 3 fps wide. Before this research, I had shot literally thousands of rounds of match-grade factory ammo, and I would have guessed that 12-15 fps SD’s were pretty typical based on my experience – and it looks like that is true for the majority of the ammo tested. However, there were a few clear outliers both above AND below that range where the bulk fell.

Here are a couple of interesting notes from this middle group:

- All of the Hornady 6.5 Creedmoor Match ammo landed in this group with SD’s ranging from 12.7 to 15.0 fps. That is just a 2.3 fps spread among all 3 types of Hornady ammo tested (120, 140, and 147 gr. ELD-M’s).

- Both types of Copper Creek custom-loaded ammo also landed in this group, although they were both a little better at 12.3 and 13.6 fps.

Remember what Bryan Litz said about ammo with an SD around 15 fps: “Good factory ammo or poor hand-loads. Useable for long range shooting but not ideal.” So these should all be considered good ammo when it comes to consistent muzzle velocity. If some of the ammo from this middle pack prints tight groups and/or has a higher BC bullet and/or leaves the muzzle a little faster – it’s plausible that they could have a higher hit probability at long range than even those in the top 5 with the lower SD’s. We’ll just have to wait to see how it all shakes out when we put it all together.

The Bottom 4

After firing 760 rounds for record, there were 4 types of ammo with SD’s of 17 fps or more:

- Winchester Match 140 MatchKing: Average SD = 17.0 fps

- Nosler Match 140 Custom Competition: Average SD = 17.9 fps

- Nosler Match 140 RDF: Average SD = 19.9 fps

- Remington Match 140 OTM: Average SD = 21.2 fps

There is no way around it: Those SD’s are too big to be considered “match-grade.” The average Extreme Spread (ES) of those over 10-shot strings was 56-68 fps, which definitely makes it hard to hit anything at distance. For context, 60 fps of muzzle velocity difference equates to 17” of vertical drop at 1,000 yards for the average 6.5 Creedmoor ammo tested. Here is what Litz said about ammo with an SD of 20 fps or more: “Excessive muzzle velocity variation. Not suitable for long range shooting.” I know this isn’t going to make those companies happy – but I agree. Consistent muzzle velocity is critical to long-range shooting, and it looks like these brands simply failed the test.

Predicting The Future

Okay, before we move on – I did want to say one thing. Some might think this academic, but I think it’s important. The chart below shows the range of SD’s that statistics would say there is a 90% chance that the true SD of the population would fall into. What do I mean by “the true SD of the population”? Well, we know exactly what the SD was for the 40 shots I fired of each type of ammo – but you will never fire those same shots. In fact, I’ll never fire those same shots again even from the same rifles! Since those were in the past we can quantify the variance with absolute precision – even out to the 5th decimal place if we wanted. But, if we ran this test from start to finish again with new boxes of ammo – would we get the same SD’s out to the 5th decimal place? No.

“Just because we can measure or calculate something to the 2nd decimal place doesn’t mean we have that level of accuracy or insight into the future! We can only speak in terms of absolute, precise values about shots fired in the past. When we’re trying to predict the future, we can only speak in terms of ranges and probabilities.” – from Statistics For Shooters Part 1 – Predicting The Future

When I refer to “the true SD of the population,” I’m talking about predicting what the overall SD would be if we fired 100,000 rounds of each type of ammo. See now we are talking about the future, not the 40 rounds that I shot in the past. This is precisely where statistics can help us get valuable insight!

With that in mind, the chart shows a blue dot which is the measured SD for each 40-shot sample, but then there is a range on both sides of that point representing where the SD of the population might fall. I chose a 90% confidence level for these calculations, which basically means if we bought 10 cases of ammo we could reasonably expect the ammo in 9 of those cases to have an SD inside that range and 1 might fall outside of that range. There is a 5% chance it would fall above the top arrow and a 5% chance it’d fall below the bottom arrow, leaving us 90% right in the middle.

You can see that there is considerable overlap between a lot of these types of ammo. Look at all of those where the blue dot is between 13 and 15 fps, which is the Copper Creek 140 to around the PRIME 130 ammo. The majority of those ranges overlap, which means you can’t have much confidence that there would be a real difference between those over the long haul. I realize that I’m kind of undermining my own test results by saying that, but I’m more interested in helping you guys have real insight than propping up my research as “definitive.”

With this in mind, I think of this as “classes of ammo.” Those first two types of ammo (Federal 140 and Sig) seem to largely be in a class of their own, at least based on our 760-round sample size. Then the 3 types of Berger ammo are somewhere between those and that big middle group that included 10 types of ammo. Then you have those on the tail end that start creeping up pretty high. Now there is still considerable overlap between say the Hornady Match 140 ELD-M ammo and the PRIME Match ammo, so maybe those wouldn’t turn out to be so different over a larger sample size – or maybe the true SD of the population for the PRIME ammo would end up at the bottom of its range and the Hornady would be at the top of its range – in which case they’d reverse order. So those with significant overlap could be a toss-up, depending on the sample you happened to get. But, with all that said – the Sig ammo is almost certainly going to always beat the Nosler ammo (assuming neither of them changes their components or processes).

If you are wondering how to get more confidence and/or tighter ranges, I want those too! The problem is you have to shoot A LOT more ammo. As an example, the Hornady 147 gr. ELD-M ammo had a measured SD of 13.7 fps and a 90% confidence range of 11.6-16.9 fps based on our 40-shot sample. If we had fired 100 rounds with that same SD, that would just shrink the range to 12.3-15.5 fps. If we’d shot 200 rounds with the same SD, we could say with 90% confidence the true SD of the population would be between 12.7 and 14.9 fps. So even if we fired a whole case of every type of ammo and burned out multiple barrels doing the research – we would still have a fairly significant range with overlap between some of these.

Now, let’s go the other direction: What if we’d only fired 10 rounds and got the same 13.7 SD? We would only be able to say the true SD of the population is somewhere between 10.0-22.5 fps! That is a massive range! See why I decided to shoot 40 rounds of each type of ammo? (Also, see why you should fire more than one 10-shot string to see what your SD really is?) I was trying to shrink the ranges as much as I could – without having to refinance my house to pay for the ammo! It’s always a balance of confidence and further investment and time in testing, but I hope this gives you guys context for how to interpret the results. 😉
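If you want to run these ranges for your own shot counts, here is a minimal sketch. It assumes normally distributed velocities and uses the textbook chi-square confidence interval for a standard deviation. To keep it dependency-free, the chi-square quantile comes from the Wilson-Hilferty approximation, so the output can differ from an exact calculation by around 0.1 fps. The function names are mine, and this is one standard way to get ranges like the ones quoted above, not necessarily the exact calculation behind the chart.

```python
import math
from statistics import NormalDist


def chi2_quantile(p: float, dof: int) -> float:
    """Wilson-Hilferty approximation to the chi-square quantile."""
    z = NormalDist().inv_cdf(p)
    k = 2.0 / (9.0 * dof)
    return dof * (1.0 - k + z * math.sqrt(k)) ** 3


def sd_confidence_range(sample_sd: float, n: int, confidence: float = 0.90):
    """Approximate (low, high) range for the true SD given a measured SD over n shots."""
    dof = n - 1
    tail = (1.0 - confidence) / 2.0
    low = sample_sd * math.sqrt(dof / chi2_quantile(1.0 - tail, dof))
    high = sample_sd * math.sqrt(dof / chi2_quantile(tail, dof))
    return low, high


if __name__ == "__main__":
    for shots in (10, 40, 100, 200):
        low, high = sd_confidence_range(13.7, shots)
        print(f"{shots:>3} shots: {low:.1f} to {high:.1f} fps")
```

Notice how slowly the range tightens: the width shrinks roughly with the square root of the shot count, so cutting it in half takes about four times as much ammo.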

Average Muzzle Velocity

Now let’s do one more head-to-head comparison, and that is simply the average muzzle velocity. These were all 6.5 Creedmoor match-grade ammo but the bullet weights ranged from 120 to 147 grains – so the muzzle velocities had a pretty wide range. In fact, there was just over 350 fps difference in the average MV – even when shot from the same rifles!

Here are the overall average muzzle velocities that I recorded over the 40 rounds, and they’re grouped by bullet weight:

Here are a few interesting things that stuck out to me about the chart above:

- The biggest outlier is the Berger Match 120 gr. Lapua Scenar-L ammo, which was 112 fps faster than the Hornady Match 120 gr. ELD-M ammo.

- The Berger 140 Hybrid ammo has the same MV as the PRIME 130 ammo – even though the bullet weighs 8% more.

- The Black Hills 147 gr. ELD-M ammo had an average MV that was 77 fps faster than the Hornady ammo loaded with the same bullet.

- The Sig ammo that had crazy consistent muzzle velocity (9.1 fps SD) was one of the slowest compared to all the rest of the ammo using a 140 gr. bullet. In fact, the Berger 140 ammo was 124 fps faster! I wonder if that increase in speed more than makes up for the slight difference in SD in terms of hit probability at long range? We’ll definitely learn the answer to that in a subsequent post!

As I mentioned earlier in this article, veteran long-range shooters are more concerned with the consistency of their muzzle velocity than maximizing their muzzle velocity. If they can find something more consistent that is 50 fps slower, that is what the majority would decide to go with. There was a time that “flat-shooting cartridges” were all the rage, but with the ballistic engines we have now you can use a slower, more consistent velocity and it just means you dial a couple clicks more – but you have a higher hit probability because you have less vertical stringing. I say all that so we don’t put too much weight on the overall muzzle velocity here, although if you can get it fast and consistent that would be having your cake and eating it too.

For more context on this, check out How Much Does Muzzle Velocity Matter?

Overall Summary

Finally, here is the overall summary showing the highlights for each type of ammo in tabular format. It includes the average 10-Shot SD and average muzzle velocity that I shared above, but it also includes the average 10-shot ES and the difference in average velocity between the two boxes.

Any Correlation to Consistency of Physical Measurements?

In the last article, I published data about physical measurements of the loaded rounds to see how consistent they were in terms of overall length and weight, and I also measured how concentric they were (i.e. how much bullet runout the loaded rounds had). I wanted to measure all of that stuff because as reloaders we can often obsess about those kinds of things, and we tend to believe those things are highly correlated to performance – meaning the more consistent the ammo measures the better it will perform. I thought it’d be interesting to see whether ammo that was good or bad in one of those aspects clearly showed it in the field when it came to consistent muzzle velocity or group sizes. So let’s take a quick look at that.

In the last article, we saw that both the Remington and Nosler 140 RDF rounds that I took apart had the most variance in powder charge weight – and those two also had the most inconsistent muzzle velocity of the 19 types of ammo tested. But, remember I didn’t buy an extra box of all 19 types of ammo to deconstruct and measure the individual components. I did weigh loaded rounds of all 19 and charted that variance, but I only deconstructed 7 types of ammo.

But, while we might want to celebrate a clear correlation there, it isn’t quite that clear. The measured standard deviation in powder weight for the Berger Match 140 Hybrid ammo was very similar to the Remington (0.17 and 0.20 grains, respectively), but the Berger Match 140 Hybrid ammo ranked in the top 5 in terms of muzzle velocity consistency. So it seems like if there was a strong correlation between powder weight variance and muzzle velocity consistency those would have more similar performance – but they didn’t.

Another thing that muddies the waters is that the Copper Creek 144 LR Hybrid ammo had the most consistent powder weight measurements. Its SD was just 0.09 grains, compared to the 0.17 grains of the Berger 140 Hybrid – so you’d think it should have had super-consistent velocity. The Copper Creek ammo did finish in the top half, but outside of the top 5, and the Berger 140 had a slightly lower muzzle velocity SD even though its SD in powder charge weight was almost twice as much.

What if we look at both the brass weight variance and the powder weight variance – would that complete the picture? The brass weight variance of the Copper Creek ammo was the highest (loaded with Hornady brass), so maybe those things offset each other. In fact, when you look at the variance in total weight of the loaded rounds, we saw that the Sig match ammo and the Berger 130 Hybrid ammo were most consistent – and sure enough, those were some of the top performers here for muzzle velocity consistency! But the 3rd most consistent by measured weight was PRIME and it finished slightly below average in terms of muzzle velocity consistency. The Nosler 140 RDF ammo actually ranked 6th in terms of weight consistency of loaded rounds, but it was one of the very worst in terms of muzzle velocity consistency with an SD of 19.9 fps.

So at least from my perspective, there isn’t a strong correlation between those physical measurements and how consistent the muzzle velocity was. Now, if I’d have taken apart all of the rounds and had a better breakdown between the powder weight and brass variance, maybe a combination of those would have some correlation, but at least based on the data I collected, it seems like there is too much noise to make any claims about correlation. That is very interesting to me, because don’t we all obsess about those things when we’re reloading? Maybe the relationship is just more complex than I’m able to untangle. If anyone else has any insight into this, please leave a comment and enlighten us all!
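For anyone who wants to check this kind of relationship on their own measurements, one common approach is a rank correlation, which asks whether the ammo that ranks best on one measurement also tends to rank best on another. The sketch below is a plain-Python Spearman rank correlation. It is not the analysis used for this article, and the two example lists are made-up placeholder numbers, not data from this test.

```python
import math


def ranks(values):
    """Rank values from 1 (smallest) upward, averaging the ranks of ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across any run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(x), ranks(y))


# Placeholder numbers only (NOT measurements from this field test).
placeholder_x = [1, 2, 3, 4, 5]
placeholder_y = [2, 1, 4, 3, 5]
print(round(spearman(placeholder_x, placeholder_y), 3))
```

A value near +1 or -1 suggests a strong monotonic relationship and a value near 0 suggests none. With only 7 types of ammo deconstructed, any coefficient would be very noisy, which fits the caution above about making claims from this data.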

Up Next

Alright! That’s it for the summary and head-to-head velocity comparisons. The very next post will share the exact details I collected for each type of ammo, along with some interesting nuances I discovered with many of them.

The next post has been published and can be viewed here: Live-Fire Muzzle Velocity Details By Ammo Type

If you’d like to be the first to know when the next article is published, sign up to receive email notifications about new posts.

6.5 Creedmoor Match Ammo Field Test Series

Here is the outline of all the articles in this series covering my 6.5 Creedmoor Match-Grade Ammo Field Test:

- Intro & Reader Poll

- Round-To-Round Consistency For Physical Measurements

- Live-Fire Muzzle Velocity & Consistency Summary

- Live-Fire Muzzle Velocity Details By Ammo Type

- Live-Fire Group Sizes & Precision

- Summary & Long-Range Hit Probability

- Best Rifle Ammo for the Money!

Also, if you want to get the most out of this series, I’d HIGHLY recommend that you read what I published right before this research, which was the “Statistics for Shooters” series. I actually wrote that 3-part series so my readers would better understand this ammo research that I’m presenting, and get more value from it. Here are those 3 articles:

- How To Predict The Future: Fundamentals of statistics for shooters

- Quantifying Muzzle Velocity Consistency: Gaining insight to minimize our shot-to-shot variation in velocity

- Quantifying Group Dispersion: Making better decisions when it comes to precision and how small our groups are

PrecisionRifleBlog.com A DATA-DRIVEN Approach to Precision Rifles, Optics & Gear


Cal, well done as always. Regarding the non-correlation of powder weight and mv, I didn’t really expect any correlation there, if at all. As handloaders, we – at least some of us – also take care of the brass in addition to the powder weight. Not only brass weight but also neck thickness to reach a consistent neck tension, which is crucial to mv in my experience. This, most likely, will not happen for most of the mass produced ammunition, simply because it would be too costly. Consequently, my hypothesis is that there are variances in the brass used by the different manufacturers – something common and known. My 2 cents.

Chris

Chris, I couldn’t agree more. I think the quality and consistency of the brass might be the biggest factor that plays into consistent muzzle velocity. I’d lump consistent neck tension in that group too, since I see all of that as being related.

I have an idea for a few tests that I hope to get to one day to research that further and try to gather some hard data on it. It’d be fun to see if that hunch plays out in empirical data. I’d suspect it would, but I’m always surprised when you actually go to measure something in a controlled way. But, that’s what happens with real research. Often times it results in even more questions than it answers! 😉

Thanks for the kind words. Glad you enjoyed the content. This muzzle velocity data was especially interesting to me. It was fun for me to analyze and talk about.

Thanks,

Cal

Cal, I totally agree. Once started, results often bring up unexpected questions. For ammo, this is so fascinating, because it is apparently a very simple topic, but if you get into the details it becomes quite complex due to the high amount of variables.

With your results suggesting that there is absolutely match worthy factory ammo, I can’t wait to see what the results are with regards to precision and consequently accuracy. Ultimately, that’s what counts. This however will not only depend on the ammo, but is also impacted by the rifle. My expectation for your next article would be that there is a “winner” for precision; however, the result could well be rifle specific. This experience was what had me start with loading. I was tired of buying tons of different factory ammo to find one that works well in my specific rifles.

Thanks again for your effort,

Chris

Christian, you couldn’t have led into my article on the group size results any better! Being able to tune a load for a specific rifle was exactly what got me into reloading too. That was almost 20 years ago, and I was still using a $350 270 Winchester rifle that I had bought used at a pawn shop when I was 12 years old. That rifle was likely made in the 1970’s, and tuning a load for it did tighten up some groups. But, with the advances in machining and barrel quality that we have experienced in the last few years I think it might be becoming more rare that you can make a significant improvement by tuning a load. I’m not saying it’s impossible, but on that 270 I might have been able to improve it by 30-40% – but with a modern rifle like this Ruger Precision Rifle I might be surprised if I could improve on the precision by more than 10% with handloads – if that.

I’m not sure if you read the Statistics for Shooters series and I don’t mean to continue to harp on this, but I think one of the mistakes we often make as shooters is that we shoot a few groups with different loads and we notice that one is a lot smaller than the others. We think we really uncovered something that is “tuned” for that rifle. As humans, we naturally tend to see patterns everywhere, but that can often lead to us finding meaningful patterns in meaningless noise. Statistics is a tool that can help us differentiate between true patterns and meaningless noise. And when we start making statistically sound judgments, you may find that many loads produce statistically similar results and loads, in general, are not as finicky as conventional wisdom would lead us to believe.

I will go into that more in the article with the group sizes, including how I went about quantifying that for each type of ammo in a way that I thought would lead to the most “statistically sound judgment”. Honestly, studying and writing about all this has changed the way I develop loads and what I think reality is when it comes to tuning a load for a rifle. I knew that I had a lot of smart readers that would question my research, so I dug and tried to learn the best way to analyze it and learned a lot along the way. It’s resulted in a paradigm shift for me. The refresher on statistics and thinking through how they apply to all this has been super-helpful for me personally, and I hope that I’m helping a lot of other shooters get perspective on that stuff. I think the group size post will definitely take that a step further, so stay tuned!

And as far as the group size determining the “winner” – man it’s a combination of a lot of things. Sometimes it’s easy to think that group size trumps everything else, or that consistent muzzle velocity is everything. But it’s a combination of all those things, along with the BC of the bullet and a few other factors that determine long-range hit probability. There is an advanced analytics tool that I plan to use in the last article in this series to help us put it all together, and I’ll use all this research data that I gathered as inputs to that analysis tool and show what the calculated hit probability is for each type of ammo at various target sizes and distances. At the end of the day, we just want to be able to hit targets at long range, so I feel like that is the best way to determine which is the “winner.” It definitely weights all the data in the most realistic way, so we don’t put too much weight on either muzzle velocity or group size.

Thanks for your comments, Chris.

Thanks,

Cal

Hi – wow- a lot to review. The reason this article hits home is that (like many) I don’t reload. But unfortunately I don’t shoot a 6.5 but shoot a 6xc and .260 Remington.

With the .260 i shot Gorilla 130 Berger Hybrids – couldn’t find any so ordered Copper Creek 140’s. Now, based on your info it looks like I should try the Federal Gold but it is only available in SMK 142 BTHP. So who knows – guess I’ll have to buy 2 more Labradars.

I get my 6xc directly from Tubb and assume (?) that I am buying something between a factory load and a hand load but again who knows.

Not hand loading I just want to eliminate some of the unknowns and your work helps.

As a side de-railment I decided to venture down the ELR path. A few years ago at one of the AB seminars in Utah I spoke with Bryan and Mitch Fitzpatrick and they got me excited about the .375 EnABELR. I ordered 300 rds – no gun yet.

Again – because I don’t load what got me hyped up was they were making rounds available through them. Peterson – 407 Berger’s. So I jumped – ordered a JJRock XL action. A year later it comes in – John Kalil says it has one of the best barrels he has seen from Bartlein. Topped with an ATACR 7-35 and a Charlie Tarac I should be good to go.

Now – AB has stopped supplying loaded rounds so I am “big hat – no cattle”. Bought a few hundred Peterson cases – 379 and 407 Berger’s – VV 24n41 (based on Mitch recommendation). Now I have to find someone to load them – back to square 1.

Those of us who for whatever reason don’t load should appreciate your research. My thinking was that shooting a lot of rounds in all kinds of conditions would be more beneficial than taking time to hand load.

Also – are you going to include 40 of your own loads for comparison??

Thoughts?

Thanks, Charlie! You are exactly the kind of guy I had in mind when I was doing this. There are a ton of people just like you that don’t load their own ammo, and I couldn’t agree with you more when you said, “My thinking was that shooting a lot of rounds in all kinds of conditions would be more beneficial than taking time to hand load.” You are absolutely right! At least that would be the right call for the overwhelming majority of shooters. If you aren’t shooting Extreme Long Range (ELR) or aren’t one of the top 100 shooters in the country – then I can almost guarantee you’d be better served doing what you said. In fact, I have the empirical data to back up that claim! That was the conclusion I feel like the data pointed to in the “How Much Does It Matter?” series I did a couple of years ago. I have a lot of friends that talk to me about getting into reloading, and I tell most of them, “Don’t do it!” Factory ammo has gotten so good over the past several years. And at least until the great ammo shortage of 2020/2021 I would claim that you wouldn’t save money doing it (because of the equipment and component cost … see this post for an analytical breakdown of that: The Cost of Handloading vs. Factory Ammo) and might not even produce better ammo than some of this factory ammo. Lots of people think they do, but I really can guarantee that they’re not shooting large enough strings to really know what their SD is or they are filtering out some of the shots (like throwing out the first shot or the highest/lowest or ignoring a string that might have had a high SD and only telling people about the really tiny one they got over a very small sample size). It’s harder to get in the single digits than you might think, at least over a sample size of 20 shots or more.
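The point about small strings can be illustrated with a quick simulation. This is a minimal sketch, assuming shot velocities are normally distributed around a true SD of 10 fps (an illustrative value, not a measurement). It draws many simulated strings of a given length and reports the range that the middle 90% of measured SDs fall into. The function name and parameters are hypothetical.

```python
import random
import statistics


def sample_sd_range(true_sd, shots, trials=5000, seed=1):
    """Simulate many strings of `shots` rounds and return the 5th and 95th
    percentile of the measured (sample) SD across those strings."""
    rng = random.Random(seed)
    measured = sorted(
        statistics.stdev([rng.gauss(0.0, true_sd) for _ in range(shots)])
        for _ in range(trials)
    )
    low = measured[int(0.05 * trials)]
    high = measured[int(0.95 * trials) - 1]
    return low, high


# Assumption: a true SD of 10 fps, chosen only for illustration.
for shots in (5, 10, 20):
    low, high = sample_sd_range(10.0, shots)
    print(f"{shots:>2}-shot strings: measured SD mostly between {low:.1f} and {high:.1f} fps")
```

Under these assumptions, the same ammo produces a much wider range of measured SDs over short strings than over 20 shots, so a single small string in the single digits says little about what the ammo does over the long haul.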

Sorry for the rant, but I think you hit on exactly what I’m hoping a lot of people take away after seeing this. You don’t have to reload to shoot long range! That was true 15 years ago, but isn’t any more. Now obviously you have to pick good ammo (and not all of these fall into that category), but that’s what I’m hoping to help people sort through so they can find success without having to try all this themselves.

Also, I would suspect that the overarching findings from this research project could be broadly applied to other cartridges. For example, I’d expect that most of Nosler’s ammo would have higher SD’s than other brands. I don’t know that, so I can’t say that for certain … but it seems like a reasonable assumption based on this data. I’d also expect Berger’s loaded ammo to be one of the top performers for other cartridges, as well. Again, we are making some assumptions there and we’d have to see the data to know for sure – but I don’t think it’s a stretch. So I think you’re on the right track thinking about trying some of the better performing brands in your other caliber.

Tubb’s ammo is excellent. I have personally bought some ammo from him for my old 6XC and it was really good. I actually think he uses a Prometheus powder scale to load that (or that’s what I’ve heard). If he weighs every powder charge, I would expect it to be one of the top performers. I’d consider it custom loaded ammo, and high-end custom loaded ammo at that.

Your ELR setup sounds killer. I’m serious. Those are some of the exact equipment decisions I’d make. You’re going to really enjoy that. And your “big hat no cattle” comment made me laugh out loud. I hadn’t heard that before, but I totally get what you’re saying. I hate to hear that AB isn’t offering ammo any longer. I had some of their ammo for a 375 CheyTac and it was really good. That isn’t a surprise coming from those guys. They are as serious about this as any group out there.

And I didn’t include my handload data in this muzzle velocity data, because it probably isn’t helpful. Most of my loads that I use for PRS and NRL-style matches and distances run 8-11 fps SD’s. For ELR (distances at 2,000+ yards) I get a little more OCD, and like to see it below 8 fps. The 300 Norma Mag that I used in the Nightforce ELR Steel Challenge last year had SD’s around 5 fps. The photo below shows a 36-shot string that I shot while practicing one day before that match. It was 36 consecutive shots (video to prove it), which is a huge sample size and I’ve never heard of something that small over that large of a string. I was pretty proud of that!

But, that was expensive. I was using Lapua brass and Hornady A-Tip bullets (plus really high-end reloading equipment) – and the time I spent loading and double-checking everything was excessive and only worth it if you are shooting ELR competitively, in my opinion.

Thanks for the thoughtful comments, Charlie! I appreciate you chiming in.

Thanks,

Cal

Thank you sir! Do u have a patreon so we could send you some donations?

Thanks, Brandon. I do have a way to donate through PayPal. Here is a link:

Thanks,

Cal

Cal

Congratulations on the article, extremely rich in details and information.

Previously it would be possible to choose an ammunition taking into account the chamber and barrel or just testing in practice.

It would be possible to inform the Twist Rate of the rifles used in the test.

I am waiting for the next articles.

Greetings from Brazil

Hey, Humberto. Thanks, buddy. Glad you found this interesting. Good question on the twist rate. Both rifles were 1:8 twist.

Thanks,

Cal

Regarding your last “enlighten us all” statement, there are two issues that could be considered for further investigation, (our data currently being little more than anecdotal) so any research you have done in these areas that we might have missed would be gratefully received.

The first is; it would be interesting to investigate the relationship between powder charge consistency and case capacity. Brass weight alone appears to only have a loose correlation to capacity consistency in our investigations.

This is obviously outside the scope of factory ammunition assessment, but suspect it to be of significant relevance to reloaders.

The second, and way more difficult to assess is brass “qualities”, such as ductility, etc. (yes, we’ve read Litz’s work)

Given the challenges of brass science, this is likely to be outside the scope of ‘shooter investigation’, but we will all have our opinions on that one just the same.

It’s a long, long rabbit hole, but we believe the relationship between powder charge and case capacity consistency to be of greater value than many might think.

Thanks for your work Cal.

Very interesting thought, Steve. I have considered a brass test where I bought 100 pieces of brass from a bunch of manufacturers in the same cartridge, and then tested a few identical loads in each of them to see what produced the most consistent muzzle velocities. I had even thought about sending a few cases of each off to a metallurgy company that could analyze the pieces and give me back some info like what you mentioned. I even thought the Annealing Made Perfect guys might be able to help, because they have tested a ton of brass over the years and looked at a lot of brass under a microscope. They have definitely taken the amateur annealing process most of us were doing to another level based on sound science and engineering. They have a few good articles on their website, including this one: https://www.ampannealing.com/about-brass-hardness/

So it’s an interesting idea. I think a really thorough study on 10+ reloading variables where you change one variable at a time and measure groups and MV would be very interesting – and time-consuming. But maybe then you could do some regression analysis to try to understand relationships between variables. You’d need to do it over multiple cartridges and it’d be hard to control for everything, so the sample size would have to be large – which means it could get giant quickly. It might take several years to complete, but maybe one day I’ll start on it and publish it as I go. I wish there was a way to crowdsource a project like that so we could all contribute, but I’m not sure how you’d control for that. If you ever get the itch to do it, I’d love to see it. If not, maybe I’ll dive into it one day. Either way, it’s an interesting idea! I appreciate the comment.

Thanks,

Cal

Cal, I have not read tonight’s post yet, and can’t wait, but I am a little confused. I see this is part 3 and on May 11 I read what is now called Part 2. What was part 1?

Hey, Jay. It is confusing! Part 1 was published before the Statistics for Shooters series. Honestly, I was writing this content and thought, “Man, a lot of people are going to struggle to understand this if they don’t understand a little about statistics first.” For example, most shooters talk about ES when it comes to velocity more than SD … but that actually is a far weaker measurement and not what I wanted to rank these by. When you average it over four 10-shot strings the rankings by ES and SD become about the same, but SD is a far better stat when it comes to quantifying how spread out data is. There were also some things related to group size, like relying on mean radius instead of ES for some of the same reasons. So after I did the first post, I decided to write the Statistics for Shooters series instead of trying to explain all that stuff along with the results. Those articles would have gotten so long … in fact I originally started writing this article that way and it was like 30+ pages long in Word … with narrow margins.
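As a small illustration of why ES is the weaker stat, the sketch below computes average, ES, and SD for two strings. Both velocity lists are made-up numbers, not data from this test. They share the same ES because ES only looks at the fastest and slowest shot, while SD uses every shot in the string.

```python
import statistics


def summarize(velocities):
    """Return the average, extreme spread (ES), and sample SD of a string."""
    return {
        "avg": statistics.mean(velocities),
        "es": max(velocities) - min(velocities),
        "sd": statistics.stdev(velocities),
    }


# Hypothetical strings in fps (NOT measured data).
clustered = [2700, 2709, 2710, 2710, 2711, 2720]  # most shots near the middle
split = [2700, 2700, 2700, 2720, 2720, 2720]      # shots piled at the extremes

for name, string in (("clustered", clustered), ("split", split)):
    stats = summarize(string)
    print(f"{name}: avg {stats['avg']:.0f}, ES {stats['es']}, SD {stats['sd']:.1f}")
```

Both strings report an ES of 20 fps, yet the second has a noticeably larger SD, which is the difference that matters for predicting future shots.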

So, I consider Part 1 as the Intro to this field test and the reader poll that I did. That was published Oct 24, 2020. I know that was a long time ago. The other delay in publishing this stuff was the fact that you couldn’t find any of this ammo for any price for months. I figured publishing it when you couldn’t actually find any of this stuff wasn’t helpful, so I waited until some of it started coming back in stock a couple of weeks ago. But now I’m on it, and hope to have it all published over the next several weeks.

Here are links to all the posts so far:

Part 1: Intro & Reader Poll – Cast Your Vote For Which Will Perform Best

Part 2: Round-To-Round Consistency For Physical Measurements

Part 3: Live-Fire Muzzle Velocity & Consistency Summary (this article)

Part 4: Live-Fire Muzzle Velocity Details By Ammo Type (plan to publish tomorrow, 5/28/21)

Part 5: Live-Fire Group Sizes & Precision

Part 6: Overall Performance & Long-Range Hit Probability

Thanks,

Cal

Thanks for the response. I had read Part 1 but did not connect that as Part 1 when I started reading Part 2 or 3. In fact, “part 1” got me into reading your blog. I had finally purchased a RPR in 6.5CM and then ran across your Part 1 while surfing for long range shooting. I have since read back a couple of years and now forward through Part 2. I will soon be asking questions on that once I get to a second reading.

Thanks for all your thorough explanation of the details. I look forward to then reading Parts 3 and 4 ( a lot of catching up to do.)

Thanks, Jay

Cal — again, thanks for the time and financial commitment you made for this effort; truly some interesting data.

I was able to find my Winchester Match Ammo 21-shot Labradar-recorded data from May 2017. At the time I was somewhat shocked at the ES/SD, though it grouped well at 100-yards and I was able to get on-target shooting at a 1-MOA steel plate at a 1,000 yards in 3 shots [being a newbie at that distance].

Here is my data:

Average: 2720.29 fps

Highest: 2790.62 fps

Lowest: 2656.61 fps

Ext. Spread: 134.01 fps

Std. Dev: 32.67 fps

It is interesting to see how closely my average muzzle velocity data [2,720] matched your data [2,719], without considering any equipment or environmental differences.

That is very interesting. I’m glad you were able to hit a 1 MOA target in 3 shots, but with an SD of 32 and ES of 134 fps … you shouldn’t count on being able to hit a target that small at long range on demand. I ran the analysis on it using the Applied Ballistics Weapon Employment Zone (WEZ) analysis (learn more about that here), and it looks like if we assume that your firing solution was absolutely perfect and that you broke the shot perfectly and that the rifle you were shooting could hold 0.3 MOA “all day” … then you’d have a 15.5% hit probability on that target. That means over the long haul we would expect to hit 1 time out of every 6 shots – at least on average.

I just say that so that anyone reading this understands that having an ES of 134 and SD of 32.7 fps makes it a crap shoot at hitting small targets at distance. It can happen, as it did for you – but it’s a low percentage shot.
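For context on those odds, here is the arithmetic as a short Python sketch. It assumes every shot has the same 15.5% hit probability and that shots are independent, which ignores any corrections a shooter makes between shots. The function name is just for illustration.

```python
def chance_of_at_least_one_hit(p_hit, shots):
    """Probability of one or more hits in `shots` independent attempts."""
    return 1.0 - (1.0 - p_hit) ** shots


p = 0.155  # per-shot hit probability from the WEZ result quoted above

print(f"Average shots per hit: {1.0 / p:.1f}")  # prints 6.5
print(f"At least one hit in 3 shots: {chance_of_at_least_one_hit(p, 3):.1%}")  # prints 39.7%
```

So under those assumptions, connecting at least once within 3 shots would happen roughly 4 times out of 10, which is consistent with it being possible but not something to count on.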

It is very interesting to see that the average muzzle velocity was so similar. I wouldn’t expect it to be far off unless you were using a lot different barrel length than the 22 and 24 inch barrels I tested with, but that is surprisingly similar.

I appreciate you sharing.

Thanks,

Cal

A little off topic but still related. I am struggling with an inexpensive bolt action rifle. ES/SD gets into the poor range (from even worse than that) with only a few loads. I have tried a couple dozen different loads. I am using Lapua brass, an electronic powder measure that is always calibrated, and good quality bullets. Neck tension is set using a mandrel. Using the same reloading equipment and techniques my Ruger Precision Rifle gets midrange single digit SD’s.

So my question is, do you need to start out with a good quality rifle? I suspect but don’t know that an investment in a new barrel and stock would go a long way in solving the SD issue.

Great question, Daniel. The short answer is yes. If you are using the same loading techniques as what gets you into single digit SD’s in other rifles, then I’d say it’s not a problem with the load. Even with the best ammo in the world, some rifles just won’t shoot. There are occasionally even rifles with custom barrels that for whatever reason won’t shoot. I have a friend who is a gunsmith that 100% focuses on precision rifles and only buys barrels from one of the top manufacturers and last week he told me that last year he chambered 2,996 barrels and 13 of those were bad barrels that just wouldn’t shoot. His process is 100% automated with CNC machines, so it’s unlikely those were bad gunsmithing. Just for whatever reason, the steel in the barrel or some other mechanical nuance within the barrel caused them to throw bullets inconsistently. The only thing to do in those moments is to throw the barrel away and chamber a new one. That might be the case for you too. I’d definitely say that it doesn’t sound like the load is the issue.

I have high-end loading equipment that is the best of the best and have taken classes on loading and read books and have a lot of real-world experience, but I doubt I could get my first rifle (a Rem 700 chambered in 270 Win) that I bought at a pawn shop in the early 90’s for $300-400 to shoot any handloads I could come up with and have SD’s in the single digits. The barrel wasn’t great quality to start with, but at this point I would suspect it has a ton of pits and cracking and the lands might be missing for the first few inches of the barrel. So it’s not a matter of effort or expertise – it’s just the equipment.

I would suspect if you replace the barrel with a custom barrel your SD’s would be similar to the RPR you mentioned. I think it’s unlikely the stock has anything to do with the SD’s (although I could think of ways it could plausibly affect it), but I think replacing the stock might have a bigger impact on group sizes.

Thanks,

Cal

Thanks for the reply Cal. What you’ve told me more than validates my thinking. The rifle in question is a Ruger American Predator in 223. The barrel already suffers from a short leade, so I can’t even load to SAAMI spec COL’s ( I actually use CBTO). Surprisingly have one load that will get sub-MOA reliably.

Thanks again.

Hello again Cal, I was just reading the last article for about the 5th time when what should pop up in my inbox but the latest instalment! Happy Days!! Thanks again for such a meticulous presentation. I really enjoy the paradoxes where certain brands ace it in one aspect and then fall flat on their face in another. I honestly thought that Nosler would have done better because they market their products as being high end and therefore worthy of high end prices. It was interesting to see Federal knock it out of the park when most .308 shooters know that their GMM ammo in that calibre is pretty much seen as the gold standard for factory match ammo. It says a lot about their manufacturing process and quality control. I am 2/3 of the way into Modern Ballistics Vol.2 and thanks to PRB and Mr Litz I have become passionate about precision shooting and reloading and hope to start performing my own experiments in the pursuit of the truth. You are an inspiration and I’ll look forward greatly to the next instalment. Kindest regards, S.

Thanks, Stephen. You sound as passionate about this stuff as I am! Watch out, because this is a rabbit hole – but it’s fun to explore!

Thanks,

Cal

3 LabRadars! That’s sweet! Do you mind sharing the settings you had for the LabRadars? Did you have any crazy results? Like velocities getting higher as you got farther from the LabRadar?

Amazing series! Keep up the good work!

Hey, Andy. Yes sir. I had a couple of friends who also owned LabRadars, so I borrowed them for this research. The settings weren’t anything special, and I didn’t experiment with the LabRadars to see if different distances changed the readings. I simply measured the offset distance from the muzzle to the side of the device and duplicated that same distance each time I set up for the test (it took several days at the range to gather all this data). I can’t remember exactly what I ran, since it’s been almost a year since I gathered the live-fire data. I think it was 12″ but it may have been 18″.

I was careful about the fundamental/obvious stuff like making sure they were aimed directly at the target, and that they were all aligned with the muzzle.

I didn’t really have any crazy results or issues. I used an SD card to save all the results, which means the LabRadar will log the detailed readings of each shot in a way you can view on a computer. The first time I set up I ran through a few exercises and popped out the SD card and looked at it on a computer to ensure I was getting good readings and the signal-to-noise ratio was good (meaning there wasn’t interference). I did follow their recommendations on configuring the frequencies of each of the devices so that they were spaced out. I just put one on the lowest frequency, one on the highest, and the other in the middle between those two. That gave 4-5 channels of separation between them.

Honestly, I didn’t have to tune anything. The LabRadar’s all worked pretty well right off the start.

The only tip I can give with the LabRadar after using it for thousands of rounds over the years is that you should mount it on a solid tripod. I originally bought their little benchtop flat stand, and it didn’t hold the radar steady enough. That would give me some weird readings occasionally. When I switched to mounting it on top of a tripod that all went away. So you can see in this test they were all mounted on high-end ($1,000+) tripods. They were absolutely rock-solid. I just went over to their website to see what they called that tabletop mount that I originally bought but noticed they don’t seem to offer that any longer. Smart move. They probably noticed the same thing and stopped selling it.

Hope that helps!

Thanks,

Cal

This is an amazing effort Cal. Thank you for doing this. I am a hand-loader, so it does not really help me on an everyday basis, but I rarely see such professional level of research done in this field. As a researcher (practicing physicist) I appreciate your attention to detail and explanation of the associated shortcomings of the statistics involved in this effort. I just donated to your PayPal account as a token of my appreciation.

Thanks, Christos! That means a ton coming from a professional researcher. I appreciate the support.

Thanks,

Cal

Do you have any concern about fouling effects on accuracy from changing manufacturers without cleaning during your 30-round reps?

No, I’m not concerned with that. I think that detail is way “in the noise.” But, that is just my personal opinion. I have talked to a few professional Benchrest shooters over the years about their cleaning regimen in detail, and I feel like they’d say the same thing.

Thanks,

Cal

Did you shoot 3 different types of ammo (3 x 10-shot strings for 30 rounds total) between cleanings?

I remember Top-Grade Ammo (Zediker) talking about getting different velocities because of differences in how the propellants fouled the barrel. He would shoot a 10-shot string of ammo containing propellant A followed by a 10-shot string of ammo with propellant B one day, and then propellant B followed by A the next day. He found that the second string of the day, shot with the second propellant, had a larger dispersion for the first 5 or 6 shots.

Did you notice differences in the dispersion at the beginning of your 10-shot strings when you changed between types of ammo for strings 2 and 3?

That is a great question, and one I bet others will have when I post the next article. So, I wanted to try to give you a thoughtful answer.

Yes, that is how I did it (Clean > 4 fouling shots > 3 different types of ammo x 10 shot strings > Clean). Top-Grade Ammo is a great book. I’ve read it cover-to-cover, along with a couple of other books that Glen Zediker wrote, and I remember the part you’re talking about. Glen made huge contributions to the shooting community. I personally think Top-Grade Ammo might be the best reloading book out there right now. Having said that, I don’t agree with everything that Zediker proposes in that book. Much of it is based on his anecdotal experience or advice from David Tubb, but not always based on rigorous research. I’m not saying it isn’t helpful (I’ve literally got highlights and notes written all over my book), but that doesn’t mean I believe everything he presents is the unquestionable truth or even meaningful in a measurable or repeatable way. Simply put, I have some “professional skepticism.”

For example, just a couple of pages before the part you’re referencing, Zediker wrote, “I recommend one of the Shooting Chrony models … You will wonder if your chronograph is accurate, no matter how much you paid. Unless you see a crazy reading, it’s accurate.” (p 269) Well, when Bryan Litz did rigorous, professional-grade research on that subject, it turned out that simply isn’t true. The research showed that the Shooting Chrony model had an average error in velocity measurement of 20.05 fps! I’m honestly not trying to be critical of Glen, but I just wanted to point out that he hadn’t done the research to support his recommendation – it was just based on his anecdotal experience. And when real research was done on that topic, it turned out his advice was wrong.

I went and re-read the part you are referencing (p 273), and I had originally even highlighted parts of it. He did fire a couple of 10-shot groups, but he didn’t offer any of the data and I’m skeptical that the sample size was adequate to draw meaningful conclusions. I also didn’t hear any statistical analysis. Glen talks about “looking for the worst group” and it seems like he is just making visual comparisons between targets and not necessarily calculating things like the mean radius of groups (read about the most effective way to analyze group dispersion). I also didn’t hear about confidence intervals or sample sizes. I’m not saying that he didn’t see patterns like he described, but I think it could be anecdotal evidence and easily fit into this scenario:

Again, I don’t want to be critical of Glen Zediker. I personally know how much time goes into writing quality content on this kind of topic, and I have the utmost respect for his contributions to the shooting community. He’s honestly one of my favorite authors on the topic of rifle shooting, and I own and have enjoyed a few of his books. But I don’t believe fouling from the previous type of ammo had a meaningful impact on group dispersion in my test – at least not on the size groups we’ll be looking at. If you’re shooting Benchrest and your groups are in the 0’s or 1’s, then it is plausible that you should worry about that, but this ammo is not capable of that kind of precision. There might also be niches like rimfire where it could be meaningful, but that’s not my application. In this research, I didn’t see any meaningful variance in the 2nd or 3rd groups like you were asking about.
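For anyone unfamiliar with the mean radius mentioned above, here is a minimal sketch of how it could be calculated from shot coordinates, with extreme spread alongside for comparison. The function names and the shot coordinates are made up for illustration and are not data from this test.

```python
import math

def mean_radius(shots):
    """Average distance of each shot from the group's center (centroid)."""
    n = len(shots)
    cx = sum(x for x, _ in shots) / n
    cy = sum(y for _, y in shots) / n
    return sum(math.hypot(x - cx, y - cy) for x, y in shots) / n

def extreme_spread(shots):
    """Largest center-to-center distance between any two shots."""
    return max(math.dist(a, b) for a in shots for b in shots)

# Hypothetical 10-shot group, (x, y) in inches from the point of aim.
group = [(0.12, -0.30), (-0.25, 0.10), (0.40, 0.22), (-0.05, -0.18), (0.31, 0.05),
         (-0.38, 0.27), (0.02, 0.35), (0.18, -0.41), (-0.22, -0.09), (0.09, 0.14)]

print(f"mean radius:    {mean_radius(group):.2f} in")
print(f"extreme spread: {extreme_spread(group):.2f} in")
```

Mean radius uses every shot in the group, while extreme spread depends only on the two widest shots, which is one reason it is less sensitive to a single flyer.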

Maybe one day I’ll do some rigorous research on the topic and we’ll see if the empirical data supports that or not. It would require a significant sample size of strings of shots, testing not just one change of powder but multiple types of powders and multiple powder charge weights, to ensure you weren’t seeing nuances of particular loads. I haven’t done the planning or added it up, but I’d suspect it would easily take at least 1000 rounds and very carefully planned testing to draw meaningful conclusions – and it didn’t sound like Glen did anything close to that.
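To give a rough feel for how quickly the round count adds up, here is a back-of-the-envelope sketch. The test design in it (powder pairs, charge weights, repeats) is purely a hypothetical assumption for illustration, not an actual plan.

```python
def rounds_needed(powder_pairs, charge_weights, repeats,
                  orders=2, strings_per_session=2, shots_per_string=10):
    """Rounds for a hypothetical powder-order test.

    Each session fires two 10-shot strings (powder A then B), and each pair
    is also shot in the reverse order (B then A), as in the A/B comparison
    described in the question above.
    """
    return (powder_pairs * charge_weights * repeats
            * orders * strings_per_session * shots_per_string)

# One powder pair, one charge weight, no repeats:
print(rounds_needed(powder_pairs=1, charge_weights=1, repeats=1))  # 40

# Assumed design: 3 powder pairs, 3 charge weights, each repeated 3 times:
print(rounds_needed(powder_pairs=3, charge_weights=3, repeats=3))  # 1080
```

Even a fairly modest design like the second one passes 1,000 rounds before any cleaning or fouling shots are counted.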

Again, great question!

Thanks,

Cal

Thank you so much! I have taken a notebook to that book as well. I also have multiple Litz books, including volume 2 with your barrel tests. The only reason I have the books I do is because of book recommendations on your blog. I reviewed my notes from the Litz books and could not find any tests on the effects of different powder fouling on MV. I was hoping this would provide a meaningful conclusion to that observation in Top-Grade Ammo, and it has.

Thank you!

Cal, I read every one of your posts the day they come out and learn a lot. Fantastic. I’ve been shooting for 60 years, handload, and get out once per week, but I don’t comment as I am a rookie compared to your readers. However, I live in the cattle country of Montana. “Big Hat-No Cattle” is a saying I hear/use a few times per month. It refers to someone acting like a hot shot cowboy who isn’t one, a blowhard who doesn’t know what they are talking about: they wear a big cowboy hat but don’t know a cow from an elk. This is absolutely not a comment on Charlie, just a fun fact.

Hey, Bob. I appreciate you leaving a comment. It doesn’t sound like you’re a rookie! That is a funny saying. I hadn’t ever heard it before the comments here. I’m going to have to use that one.

Thanks,

Cal

Well – as there are generations of ranchers in New Mexico, the “Big Hat” line always draws a chuckle.

I’m making fun of myself, as I have all this ELR gear, and if I had not been proactive I would have no rounds to put downrange – just a paperweight.

As most are aware, components are in short supply, and powder in particular is almost non-existent. Vihtavuori is hard to find even in the best of circumstances.

But all in good jest.