Are you one of those guys who has been reading this series of posts on bullet jump, and thinking to yourself, “Well, my 0.020” bullet jump sure seems to be working fine. Doubt this would be any improvement over what I’ve already got!” This is the post for you!

As Mark started sharing some of his bullet jump findings with a few shooters, he met some skepticism – even from sponsored shooters on his Short Action Customs team. Here is how Mark tells one of those stories:

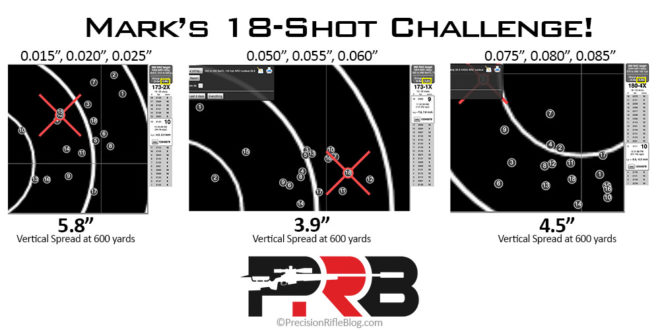

“After we’d already done most of this bullet research, we had Solomon from our shooting team fly out for a local match, and he helped with some bullet jump testing. Solomon was dead set on Berger 105’s performing best when jumping 0.020″. So, we shot his load over our electronic target system at 600 yards, and it did shoot extremely well. I struggled to convince Solomon that there might be a better jump, especially with how good the 0.020” was already shooting for him. So, I was forced to dig a little deeper and formulate a test that would compare the bullet jumps in a ‘realistic’ range of jumps someone might experience as their barrel wears during a match and/or the jump tolerance they might experience with factory ammo.

I ended up creating a simple test where we shot three groups at 600 yards. Each group consisted of 18 total shots fired: 6 shots with a 0.015″ jump, 6 shots at 0.020″ jump, and 6 shots with a 0.025″ jump, shot in a round-robin fashion. Then we cleaned the bore, fouled the barrel with a couple of shots, and performed an 18-shot test for jumps at 0.050, 0.055, and 0.060 inches. Finally, we repeated the full cycle and finished with an 18-shot test for jumps at 0.075, 0.080, and 0.085 inches.”

Mark’s 18-Shot Jump Test Method

Mark said this was originally a 15-shot challenge, where they shot 5 shots at each jump. But they eventually changed it to an 18-shot challenge by adding a 6th shot to each group because a slightly larger sample size was helpful to prevent false-positives and ensure they could have full confidence in the results.

For each jump, you’ll test a 0.010” wide window of bullet jumps. 0.010” is about how much the lands of a barrel would likely erode over 200 rounds for most mid-sized cartridges that are common in precision rifle competitions (like Creedmoor, Dasher, or x47 Lapua cartridges). So unless you are going to adjust your seating depth every 100 rounds or less, you likely need to find a range of jumps that is at least that wide and provides a similar vertical point of impact (POI) across the entire range. That is what this challenge is designed to inform you about.

There are 18 shots per group, and you’ll end up firing 3 groups – for a total of 54 shots. But, at the end of those 54 shots, Mark feels like you’ll have hard data on whether your shorter jump is really better in realistic scenarios. Here are the groups and number of shots Mark recommends:

- Group #1: 0.015-0.025” Bullet Jumps
  - 6 shots at 0.015”
  - 6 shots at 0.020”
  - 6 shots at 0.025”

- Group #2: 0.050-0.060” Bullet Jumps
  - 6 shots at 0.050”
  - 6 shots at 0.055”
  - 6 shots at 0.060”

- Group #3: 0.075-0.085” Bullet Jumps
  - 6 shots at 0.075”
  - 6 shots at 0.080”
  - 6 shots at 0.085”

Of course, if your current load uses a 0.010” jump, you could change Group #1 to be 0.005-0.015” or 0.010-0.020” to test how “durable” or consistent your current load is over the 0.010” range of bullet jumps that you will likely experience if you don’t adjust seating depth every 100 rounds or less.

Within each group, Mark fires the shots round-robin style. For example, in Group #1, they’d fire one shot with a 0.015” jump, then one shot with a 0.020” jump, then one shot at 0.025” – then they’d repeat until all 6 shots are fired at each bullet jump. Then they’d clean the barrel, foul the bore with a couple of shots, and repeat the tests for Group #2, and so on.
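If it helps to see the firing order written out, here is a minimal Python sketch that builds the round-robin sequence for one group. The function name `round_robin_order` is my own for illustration, not something Mark uses; it simply cycles through the three jumps, one shot each, until every jump has been fired 6 times.

```python
def round_robin_order(jumps, shots_per_jump=6):
    """Return the firing order for one group: one shot at each jump, repeated."""
    return [jump for _ in range(shots_per_jump) for jump in jumps]


# Group #1 from the list above (bullet jumps in inches)
group_1 = round_robin_order([0.015, 0.020, 0.025])

for shot_number, jump in enumerate(group_1, start=1):
    print(f"Shot {shot_number:2d}: {jump:.3f} in. jump")
```

Printing the list before you head to the range makes it easy to lay the rounds out in your ammo box in the order you will fire them.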

Two important notes before you do this:

- You must carefully measure the distance to the lands on your barrel with an accurate and repeatable method. How most shooters do that is not precise enough to be within +/- 0.010” – I know that was true for me. Click here to learn about the only two methods I know of that are repeatable to +/- 0.002” or less. If you don’t do this, you likely won’t be testing what you think you are.

- Adjusting your seating depth will affect your chamber pressure, so, as always, you should be careful when changing a load and watch for signs of excessive pressure. The Sierra Reloading Manual says that adjusting seating depth to match your rifle’s throat/freebore and maximize accuracy “is fine, but bear in mind that deeper seating reduces the capacity of the case, which in turn raises pressures. Going the other way, seating a bullet out to the point that it actually jams into the rifling will also raise pressures.”

Results from A Few 18-Shot Challenges

We wanted to share a few of the results from these types of challenges that Mark has already collected using his electronic target system at 600 yards.

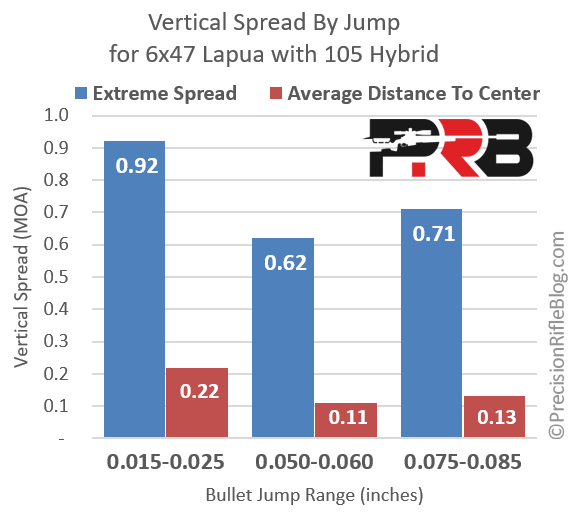

6×47 Lapua with Berger 105 Hybrid

Below are the 3 targets from this challenge with an 18-shot group on each one. I added a callout beneath each target for the vertical extreme spread that was measured for each group by the electronic target system at 600 yards (also highlighted in the yellow box on the readout).

The Problem with Extreme Spread

While measuring the extreme spread of a group is very easy to do and useful, one of the problems of only looking at extreme spread of the group is that it really only takes two shots into consideration – namely the two that are furthest apart. Where the other 16 shots hit in no way affects the extreme spread, which means we’re ignoring about 89% of the shots fired! For example, if you look at the target above on the left you can see shots are evenly distributed vertically. However, the target in the middle has most shots in a narrow vertical window, except for one outlier, namely shot #1. In fact, if you excluded shot #1 from the middle target the extreme spread would drop from 3.9” to just 2.1”. Now cherry-picking data to exclude is the exact opposite of good science, so I’m certainly not suggesting we do that. But it goes to show how drastically an extreme spread can change based on the placement of a single shot. It simply doesn’t take advantage of the full sample size.
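To make that concrete, here is a small Python sketch. The impact positions are made-up numbers for illustration only, not data from Mark's targets. It shows that vertical extreme spread depends only on the highest and lowest shot, so a single flier can dominate the result.

```python
def vertical_extreme_spread(vertical_positions):
    """Vertical ES: distance between the highest and lowest impact."""
    return max(vertical_positions) - min(vertical_positions)


# Hypothetical vertical impact positions in inches (NOT real test data).
# Nine shots sit in a tight window and the last one is a flier.
with_flier = [0.0, 0.2, -0.3, 0.1, -0.1, 0.4, -0.4, 0.3, -0.2, 2.5]
without_flier = with_flier[:-1]

print(f"ES with flier:    {vertical_extreme_spread(with_flier):.1f} in.")
print(f"ES without flier: {vertical_extreme_spread(without_flier):.1f} in.")
```

In this invented example, nine of the ten shots never enter the calculation, and the one flier more than triples the number.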

Bryan Litz discussed this topic at length in Modern Advancements for Long Range Shooting Volume II. Bryan carefully explains this in Chapter 1 of that book, but here is an excerpt with his recommendation:

“Measuring the extreme spread of shot groups is quick and easy, but it’s not actually a very good measure of dispersion. What do I mean by a good measure? A good measure should give you useful information, which is information you can use to make good decisions. When you look at the extreme spread of a 5-shot group, that measurement is determined by only 2 out of the 5 shots. In other words, only 40% of the shots are considered in the measurement. Even worse, for a 10-shot group, a center-to-center measurement is only using information from 20% of the total shots. Since the extreme spread, center-to-center measurement, is determined by only a small portion of the total shots available, it’s just sort of an indicator of precision. There are many alternative measures of precision. You could measure the location of each shot and calculate vertical and horizontal standard deviation (SD), radial standard deviation (RSD), circular error probable (CEP), etc. You could get really carried away with statistical methods of characterizing precision. Taking a step back and considering options for measuring dispersion; we want something more descriptive than extreme spread, but don’t want to go crazy with statistics. It’s my opinion that the mean radius of a shot group is a well-suited measurement for this task. Mean radius, also known as average to center is self-explanatory; it’s the average distance from each shot to the center of the group.” – Bryan Litz

Average distance to center (ATC) takes all the shots into consideration, and therefore is more representative of the entire group and not simply the two shots that fell furthest apart. It’s not that extreme spread isn’t useful. There are shortcomings to ATC as well. You could have two groups with the same ATC, but one has most shots tight with one flier way out of the group, while the other is more well-rounded without a flier but has a slightly larger primary shot cluster. In that case, the ATC is identical, but the ES is different. So, I’m certainly not claiming ES is irrelevant or not useful. While ATC does include all shots fired in the calculation, maybe we should analyze both ATC and ES when looking at groups to keep a balanced perspective.
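For anyone who wants to calculate both numbers from shot coordinates, here is a rough Python sketch of the two measures as Bryan describes them: mean radius (the average distance from each shot to the group center) and center-to-center extreme spread (the largest distance between any two shots). The function names and the four sample points are my own, chosen only so the answer is easy to check by hand. The charts in this post use the vertical component, and I am not claiming this is how any particular target system or app computes its numbers.

```python
from itertools import combinations
from math import dist


def group_center(shots):
    """Average x and y of all shots (the center of the group)."""
    xs, ys = zip(*shots)
    return (sum(xs) / len(shots), sum(ys) / len(shots))


def mean_radius(shots):
    """Average distance from each shot to the group center (ATC)."""
    center = group_center(shots)
    return sum(dist(shot, center) for shot in shots) / len(shots)


def extreme_spread(shots):
    """Largest center-to-center distance between any two shots (ES)."""
    return max(dist(a, b) for a, b in combinations(shots, 2))


# Hypothetical (x, y) impacts in inches, picked to be easy to verify
shots = [(1.0, 0.0), (-1.0, 0.0), (0.0, 1.0), (0.0, -1.0)]

print(f"Mean radius (ATC): {mean_radius(shots):.2f} in.")
print(f"Extreme spread:    {extreme_spread(shots):.2f} in.")
```

Every shot contributes to the mean radius, while the extreme spread is set by just one pair.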

ATC is more difficult to measure and calculate by hand, but software like the OnTarget Shooting app can analyze your target and provide ATC and ES for you, along with other stats.

The chart below shows the equivalent data for vertical extreme spread taken from the targets above and converted to Minute of Angle (MOA), instead of inches at 600 yards. It also shows the average distance to center (ATC) that Bryan suggested as a more complete or representative measure of the dispersion of a group.
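If you want to do the same inches-to-MOA conversion for your own targets, here is a minimal sketch. It assumes the true angular definition of a minute of angle (1/60 of a degree, which is about 1.047” at 100 yards), and the function name `inches_to_moa` is just my own for illustration.

```python
import math


def inches_to_moa(inches, range_yards):
    """Convert a linear measurement on target to minutes of angle."""
    range_inches = range_yards * 36
    # One MOA is 1/60 of a degree; this is what it subtends at this range
    inches_per_moa = range_inches * math.tan(math.radians(1 / 60))
    return inches / inches_per_moa


# The 3.9" and 2.1" vertical spreads mentioned earlier, fired at 600 yards
print(f'3.9" at 600 yards = {inches_to_moa(3.9, 600):.2f} MOA')
print(f'2.1" at 600 yards = {inches_to_moa(2.1, 600):.2f} MOA')
```

At 600 yards one MOA is roughly 6.28”, so the 3.9” vertical spread mentioned earlier works out to about 0.62 MOA.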

You can see 0.050-0.060” bullet jumps resulted in the smallest extreme spread. In fact, the ES for the shorter jump (0.015-0.025”) turned out to be 48% larger, and the average distance to center between those is 100% larger! The 0.050-0.060” bullet jumps clearly produced the most consistent vertical at long range in this test.

6BRA with Berger 105 Hybrid

Mark also ran this test with a rifle chambered in 6mm BR Ackley Improved (a.k.a. 6BRA) firing Berger 105 gr. Hybrid bullets at the same jump ranges: 0.015-0.025, 0.050-0.060, and 0.075-0.085. Let’s take a look at those results:

You can see the group with the largest jump (0.075-0.085”) produced the smallest extreme spread. The shortest jump had the largest ES at 0.84 MOA, which is 47% larger than the 0.57 MOA ES achieved with the 0.075-0.085” jumps. When it comes to ATC, all three groups were very similar. If the ATC is similar but the ES is not that means there must have been a few significant fliers in the shorter jumps, but the other shots must have clustered reasonably close together to still achieve a low ATC. On the other hand, the lower ES of the farthest jumps means it didn’t have fliers as significant, but the main cluster must have been slightly larger for the ATC to end up the same. Overall, the load with the 0.075-0.085” seems to be the best overall performer for this rifle.
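The "percent larger" comparisons in this post are simple arithmetic, and here is a short sketch if you want to run the same comparison on your own groups. The helper name `percent_larger` is mine; the two inputs are the ES values quoted above for the 6BRA.

```python
def percent_larger(value, baseline):
    """How much larger `value` is than `baseline`, as a percentage."""
    return (value - baseline) / baseline * 100


# 0.84 MOA (0.015-0.025" jumps) vs. 0.57 MOA (0.075-0.085" jumps)
print(f"{percent_larger(0.84, 0.57):.0f}% larger")
```

Note that the baseline is the smaller group, so the result reads as "the short-jump ES is 47% larger than the long-jump ES."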

6×47 Lapua with Berger 115 DTAC

Finally, Mark did a similar 18-shot challenge at 3 different bullet jump ranges with David Tubb’s 115 gr. DTAC RBT Closed Nose bullet. For the middle range on this challenge, Mark chose to test 0.030-0.040” instead of 0.050-0.060” that was used on the prior two tests. Here are the results:

On this one, it looks like the shortest jump actually had the smallest extreme spread – although it was only by 0.01 MOA! Obviously that middle bullet jump range of 0.030-0.040” had a very similar extreme spread, but it also had the lowest ATC of any of the groups. So, if I were trying to pick between these jumps, I’d go with the middle group because the ATC is 13% smaller than the 0.015-0.025” results, even though the ES is 2% bigger. Once again, the Average Distance To Center (ATC) takes all 18 shots into consideration, while the Extreme Spread (ES) only takes 2 of the 18 shots into consideration when doing the calculation. So, if you’re going to weight one metric more than the other, I’d suggest ATC.

I do have to wonder if this test for the 115 gr. DTAC would have favored a 0.040-0.050” jump range, if they’d have tried that for the middle range. I went back to the bullet jump research data for the 115 DTAC, and the chart below shows where the sweet spots were over the 6 rifles Mark collected data over:

You can see that 0.030-0.040” had an average vertical spread of 0.62 MOA over the 6 rifles that are represented in the data above, which is EXACTLY the same as the results in this 18-shot challenge. Isn’t that crazy?! The average over the 18 shots fired here with one rifle and the average over the 6 rifles tested with 3 shots in that range each, which we covered in the previous post, both ended up being EXACTLY 0.62 MOA! While the fact that they are identical to the hundredths place is likely a coincidence, it gives me a little more confidence in how repeatable and reliable this data is. You can see above that it was 0.040-0.050” that produced the smallest vertical ES at just 0.31 MOA at 600 yards. I wonder if that would have ended up being the winner if they’d done an 18-shot challenge for that range of jumps instead. Of course, they may not have had all that data when they originally did this 18-shot challenge, since this testing has been a progressive thing over more than two years. If I were using 115 DTAC’s, I might try out that 0.040-0.050” range of bullet jumps.

The Challenge & Up Next

If you’re one of those guys who already has a great load, but it uses 0.020” of jump or less, Mark wants to challenge you to go run this test for yourself. After 54 rounds, he bets you might find something that works better than what you’ve been using. That has been the case each time he’s run through these tests with other shooters! With COVID-19 stay-at-home orders in most places and rifle matches canceled, maybe it is a good time to tune your load to be ready when things start back up!

Up next, I’ll wrap up this whole series and offer a few suggestions on how to integrate bullet jump into your load development process. While the 18-shot challenge is a good way to test a couple of further bullet jumps against your current load, what is the best way to go about this to develop a load from scratch? If we now have another variable we need to tune in load development, how do I find a good load without having to shoot 100+ rounds doing load development? Do I have to test every 0.005” to find the best bullet jump? What order should I do this in, powder charge or bullet jump first? Great questions! In the next post, I’ll share some personal suggestions and advice from other top shooters to try to help you get to a good load in the fewest rounds possible.


PrecisionRifleBlog.com A DATA-DRIVEN Approach to Precision Rifles, Optics & Gear


Just to confuse things, any thoughts on the subject of big calibers – such as those used in KO2M ? Has anyone started down this path of research?

Charlie

I talked to a friend at Accuracy International who has done some tests on bullet jump in a 338 Lapua Magnum, and after extensive testing and research for their ASR contract submittal they also found that longer jumps gave improved performance. I will share more details about that in the next post, so stay tuned. I know that isn’t big bore (i.e. 375 caliber or larger), but that is the only thing I’ve heard about this kind of testing on magnum rifles. I do know one really well-respected gunsmith who uses extended freebore in 7mm SAUM chambers too, but again that isn’t a big bore like it seems you are asking about.

Anyone else know of any research like this on big bore calibers?

Thanks,

Cal

Conditions will have more to do with group size than bullet jump.

Nowhere did I see what the conditions were in the entire piece.

Very detailed subject and well thought out in theory, but it needs to be conducted in really well monitored and recorded conditions so as to identify outside effects. If conducted on another day the results would be entirely different.

I’ve got to agree with Frank on this one, but with a slightly different angle.

I mean this with no offense to the shooter, because shooting 18 shot groups is -hard-, which is why you don’t see internet heroes posting their .2MOA 18 shot groups all the time!

Without a “control” study that shows Mark can shoot both smaller, and consistently smaller (less variation), 18 shot groups at a single seating depth, how do we know that his personal variance isn’t from .11-.22 (mean radius?)? BTW anything down in the .1xx mean radius for that many shots is pretty impressive, but the fliers that are affecting the ES simply could be “bad shots” by Mark still.

I’m looking forward to Mark’s 3×18 shot test showing at his “best” seating depth his groups are smaller and more consistent!

Good points. There are virtually no experiments that have a perfect control, but I still think you can learn something from this and the results are valid. Even if he threw a couple of shots in each one, the Average Distance To Center (ATC) would aggregate over those, and it wouldn’t affect it significantly with such a large sample size. That’s why I took the time to calculate and included that metric for this analysis.

Ultimately you have to trust that the researcher didn’t do things to bias the results. I actually got to know Mark enough through conversations over 2 years that I really believe he is simply in search of the truth and not trying to convince people of something … otherwise, I wouldn’t have published his results. He’s tried to be as careful as possible in doing the experiments and has been EXTREMELY open to changing the process in any way possible to make it more reliable or trustworthy. At the end of the day, I still think there is something here for me to learn from and I plan to try it for myself. If you don’t think so … no harm! You get a full refund for all the time and money you spent on this. 😉

Thanks,

Cal

“BTW anything down in the .1xx mean radius for that many shots is pretty impressive, but the fliers that are affecting the ES simply could be ‘bad shots’ by Mark”

…or windage from the terrain, or lighting, etc. There’s a reason F-class and BR guys shoot with higher magnification rifle scopes, wind flags, and spotting scopes to eke out every last little edge on that sort of thing. Even then, it’s still a little subjective – one person might not be able to ‘see’ what someone else can.

I don’t disagree with you; getting down into that fine of a difference between A & B, or B & C, you start running into whether the test in question has the ‘power’ (in terms of sample size) to actually resolve the difference being observed, or whether it’s possibly just random noise that would swing the other way if you replicated the exact same test another day.

But… a lot of what we do in load testing is a lot closer to ‘exploratory data analysis’ (EDA) than it is to ‘clinical testing’. We’re looking for something of interest, with minimal outlay of time/effort/components. At some point an individual has to decide if what they see is a good enough argument for their purposes.

Yep. That’s why I also included the Average Distance To Center (ATC) on the analysis here. When you have a sample size of 18, you want to take advantage of that and looking at ES and ATC keeps us from being blown over by something that could have potentially been a poorly placed shot. What you see in the analysis though is that ES and ATC were never drastically different … which I believe statistically speaks to the fact that Mark was consistent in his shot placement, at least to a large degree. It’s not like one had a huge ATC and extremely low ES, or vice versa.

As far as “whether it’s possibly just random noise” … that is a great question, and where I personally went when I started looking at this data. I’m not sure if you noticed this in the post before last, but Mark actually hired a professional statistician to answer that question. That’s how serious he is about this! Here is what he said about that:

So, that seems like the answer is a pretty clear no … at least from a statistical perspective. I don’t want to overstate that, because I do personally believe that having a healthy level of skepticism is a good thing. But, I’ll just say that I’m not sure what you could have done in any practical terms to improve on the data collected. Ultimately, I’m extremely appreciative of Mark being willing to put all this time in, and then share the results with all the rest of us. I absolutely wouldn’t say it is “minimal outlay of time/effort/components.” I’d encourage you to go do experiments like this for yourself, and see if you’d call it “minimal”! Mark has redone some of these tests multiple times over multiple years. He’d learn a better way, and go back and do them all again. Any real research takes far more time than most people would think.

From this point forward, any time I do load development, I plan to try this out for myself. I’m not saying I’m going to blindly start loading everything at 0.060″ jump … but I’m convinced there is probably something here, so I’ll see what my rifles say the truth of it is. Ultimately, you don’t have to do it. Dismiss it and move on. I personally think that is shortsighted, but I won’t be offended if you are still too skeptical to give it a shot for yourself. That won’t affect my groups one bit! 😉

Thanks,

Cal

One of the best things you can do is to learn what is called DOE, or design of experiments, in order to avoid bias and waste. In our instance, conditions are a valid concern, but the test would have to be run round robin over the whole range to eliminate the concern.

Once the three smaller spreads have been narrowed, I see no reason one couldn’t pick the middle of those ranges, to extend the round robin over the larger seating depth spreads. So rather than 18 samples over each short spread, just 6 at the mid points, with the steps going from 20, to 55, to 80 in the above example. This way the round robin spreads the condition over the whole test duration. That, combined with repeating the test with another operator on another day, should answer the theory before the bbl wear or operator fatigue became an issue. But there I go spending other people’s money…

Good points, Dino. There is an art to controlling for experiments like this. I think your suggestions are good ones. But, like you said … spending other people’s money. 😉 Personally, I don’t think the results would be different in any meaningful way. A big part of that is, like you said, 18 shots is such a huge sample size and likely larger than you really need. However, a large sample size certainly helps separate the signal from the noise. I don’t know of any statistician that complained about the sample size being too big! 😉

I appreciate your thoughts. You are clearly a sharp guy. Are there any good books you’d recommend on Design of Experiments? My engineer brain seems to naturally think this way, but I’ve never had any formal training on it. I guess I’ve just read about a lot of experiments. But I’d love to find any good materials on it, if you know of any. Always looking to learn!

Thanks,

Cal

Frank, I somewhat disagree. Yes, environmental is a huge factor, but I believe this data collected by Mark is legit and accurate. In my opinion, it is a collection of data that shows a trend from large samples across a wide range of seating depths. Even with environmental differences, the trend is still shown. Just my thoughts.

Totally agree, Chris! Thanks for chiming in.

I’m not sure that matches my experience, Frank. I’ve never found a good load, and then weather or environmental conditions made it shoot vastly different. I actually don’t agree that “the results would be entirely different,” but everyone is entitled to an opinion.

Thanks,

Cal

If Mark’s shots were all on the same day under the same conditions, they are valid. In my experience, changing environmental conditions only change my group impact point, not the group size. Changing winds over long range do impact my group size, air density does not.

Totally agree! That fits my experience as well. Drastically different winds can change elevation (aerodynamic jump), but as long as all shots in a group were fired in a reasonable amount of time and it wasn’t an extremely gusty day with the wind drastically changing direction in the middle of the group … we can have confidence the results weren’t significantly affected by atmospherics.

Another brilliant article, Cal. I would go farther, though, and say that ES is nearly useless or irrelevant, because the fact that your most “extreme” shots happened to be in a particular sequence that fell within your group is random. What if that “extreme” shot that appears in your group would be the extreme for a 25 shot group also? Or a 3rd group?

The problem with ES is that it has no predictive power. And, as you correctly highlight– the purpose of all this data gathering is to support making a conclusion– a prediction. If the data doesn’t support that, it’s pointless.

It’s important to note that CEP and ATC aren’t the same thing. CEP is the *median* error and ATC is the *mean*. Because we are measuring things with a tendency to skew, the median is more informative.

But as a shooter, we really want to have a confidence level higher than 50%. Really, we’d want something more like 90% or 95% (p<0.05, right?). So what's MOST useful IMHO is the 95% confidence value– the 95th percentile containing your vertical dispersion or your MV variation, or whatever it is you are measuring. For MV, I just use two sigma. You can do that for vertical too. But for measuring overall accuracy (wind included) you'd really need a 95% confidence ring. And that is simply the size of the smallest ring containing 19/20 shots.

I don't suggest using 20 shots for load development (it's impractical). But if you have two apparently equal loads, it will likely require that many to break the tie. And for the F-class or HP rifle shooter, a 20 shot string is particularly relevant.

Totally, Justin! I hope I didn’t make it seem like ES was irrelevant. I actually went back just before I hit publish and added “I’m certainly not claiming ES is irrelevant or not useful” and put that in bold, because I didn’t want to overstate that point. I was just trying to say that it doesn’t capture the whole picture. I think ATC and ES combined are two descriptive ways to capture the essence of the dispersion of a group. If you only look at one or the other, you could potentially be missing an important aspect of that group.

I do agree on your point about the median, but didn’t feel strong enough to go against Bryan’s recommendation for ATC. Also, I know ATC is what OnTarget provides shooters with on their target analysis, so I didn’t want to frustrate readers over an academic “superiority” of a metric they probably wouldn’t have any way to calculate for their own groups. Honestly, it’s likely the differences between mean and median would be subtle for most groups, and it would be exceedingly rare that the two would lead to meaningfully different decisions. At the end of the day, I think there is a lot of wisdom in this quote from Litz:

I agree you can typically make decisions with a smaller sample size, unless the performance is very similar. And in my personal opinion, if performance is similar … just pick one and go practice instead of wasting more barrel life doing load development experiments! In the game I play, tiny differences between two loads aren’t going to lead to drastically different positions on the leader board. They might in Benchrest or other disciplines, but I don’t think they do in PRS/NRL or even ELR matches. I would bet my house that the shooter’s ability to read the wind, calculate an accurate firing solution, and break a clean shot is always far more correlated to how you’ll finish than minute differences between one really good load or another.

I do really appreciate your thoughtful comments. You’re clearly a sharp guy, so thanks for taking the time to share your thoughts.

Thanks,

Cal

I apologize in advance for the novel. I’m pretty new to PRS. I’ve really enjoyed your last few articles (well, all your articles!). I don’t reload, so I just shoot factory ammo. I recently purchased an AIAX and was looking at purchasing a factory Win Tac barrel for it. However, I have no idea what the bullet jump will be using factory Hornady 108gr ammo and the Win Tac barrel. In a similar vein of your past “How Much Does it Matter” articles, how much does it matter?

Is the velocity, etc., going to be changing much throughout the life of the barrel where I need to change my dope? Do I need to find a new MV (or something?) every “X” number of rounds? Or is the POI going to be changing due to barrel erosion where I need to be constantly re-zeroing?

Some people have said just to check my velocity with a MagnetoSpeed/LabRadar before I shoot a match and run with that. I guess that means I wouldn’t have to buy 1k rounds of the same lot # at a time if I’m constantly tweaking the velocity? However, others, like Dan Jarecke, say they don’t use a chronograph to get their velocity, they true it at the range and plug in their dope values into the Kestrel. So obviously they don’t use a chronograph before each match?

I am getting into PRS club matches and I don’t want to be tweaking a lot of data every few times I go out to shoot. Worrying if my MV is off, my POI is off due to bullet jump, or if I’m just shooting poorly that day, etc. If this is a thing, would a 6.5 or 308 be more forgiving where I wouldn’t have to update my data?

Am I overthinking this?

Thanks for the help!

Ha! Mick you are leading right into my next post. I plan to speak specifically to the “How Much Does It Matter” side of this, so I’ll side-step that part of your questions for now, but I promise I plan to talk about it in the next post.

As far as how fast your velocity changes … like most things, it depends. It mostly depends on the cartridge, in my experience. I personally measure my velocity every 200-300 rounds. I clean my rifle and recheck my zero about that often also. I’m not saying that is “the rule” … but it’s my personal rhythm. Towards the end of a barrel’s life, it can start changing faster than that. I usually have an idea of about where I expect a barrel to go, and so when I start getting close to that I measure more frequently. If it’s ever off by 25 fps or more from what I expected it to be, that is the cue for me to screw off that barrel and screw on a new one. It doesn’t mean you can’t shoot it longer, but for me … a barrel is a commodity and you are just risking it going south during a match, and I can say from experience that sucks. If you drove 300 miles to a match, stayed a couple nights in a hotel, and your barrel became erratic during the match … you’ll kick yourself for not replacing it sooner. Been there, done that!

And I also agree with Dan Jarecke. He’s a smart guy and an outstanding shooter. If you ever hear me say something that contradicts Dan, I’d suggest you ignore me and go with what Dan says! 😉 If you know your dope and just adjust your velocity to match what the bullet is doing at distance … that works too! You don’t absolutely need a chronograph. Before the days of LabRadars and MagnetoSpeeds, I actually think most light-based chronographs were so bad (or at least prone to error) that adjusting your MV to your hits at distance was the only reliable way to do it. Litz has told me that most of the military actually doesn’t use chronographs or even have access to them out in the field … so they do exactly that. If you have a good BC value, then it should get you on out to whatever distance you trued it to.

In my experience, there is always a bit of fine-tuning to get your ballistics to really align with hits in the field. If I’ve measured my velocity with a LabRadar (and I know my MV is very consistent because of the precision of my loads), then I may actually adjust the BC and not the MV. There is a trial and error approach to this. I usually gather “truth data” about what adjustment it takes to center my shot on a target at various distances, and I also record all the environmental data in the moment of when I fired those shots. Then I usually go to a ballistic solver on my desktop and play around with the MV and BC until I can get the numbers to align as close as possible at all the distances. Sometimes it takes tweaking a little of both.

Of course, now with Doppler radar traces that is becoming less and less necessary. In fact, if you have a personalized Doppler drag curve, you shouldn’t have to do any of that in theory. You can read more on that here: Personalized Drag Models: The Final Frontier in Ballistics?

I will say that for most PRS matches, the distances aren’t far enough to have to worry about really fine-tuning your ballistics. It’s mostly Extreme Long Range (ELR) stuff that I do that for, where you might have to engage a target at 1500 yards and 3000 yards. If your curve isn’t right with that big of a range, you are just sending up a prayer! If you are shooting inside of 1000 yards, you typically won’t be more than 1-2 clicks off in either direction without much truing, and that is unlikely to cause you to miss most targets in PRS matches. Now if you are in the top 20% of shooters in the PRS (like Dan), yes it matters! But, for most of us (me included), I doubt I miss very many shots at all because I didn’t spend enough time truing my ballistics. I actually hope to do a massive ballistic engine test at some point to put that to the test and try to quantify how much it matters, but that is my gut based on my experience … which is tens of thousands of rounds fired at long range. Not saying I know everything, but I’m also not new to this game either.

I would say the flatter shooting the bullet, the less you should have to worry about minor changes in muzzle velocity. So a 6.5 Creedmoor would always be a better choice over a 308, if you’re just ringing steel. The ballistics are more forgiving. A 6mm Creedmoor should theoretically be even more so, although I bet the difference is very minor and you’d have to be a good shooter to notice the difference in terms of the number of targets you’d hit.

And also, a lot of this is having the experience and confidence to know what you need to change and when. I bet if Dan misses low on more than a couple of targets, he will probably go adjust his muzzle velocity down a little bit in his Kestrel … and then he won’t think about it again over the course of the match. It’s just not a big deal to tweak stuff like that once you have some experience under your belt. Ultimately, the only way to get that is to get out and shoot some matches … and occasionally miss targets and learn from it. No amount of reading on the internet can help you gain that kind of experience and confidence that the bullet is going to go where you want it to. It is easy to overthink it. I’d just suggest to get out and try it. You will learn and have fun at the same time. Don’t fixate on things like that too long before you start going to matches. That is where you will learn the most and the fastest. Another tip is to try to get in a squad with guys who shoot better than you do. I have learned more from those kinds of matches than anything I’ve ever done, including some of the Long Range Universities I’ve attended, a stack of books I’ve read that is literally probably 3 feet tall on rifles and long range shooting, and any amount of practice at my private range. You can just see first hand how they handle stuff like that, and in my experience, most of the guys will help you get your stuff squared away. It’s a good community of shooters out there, with lots of great guys that want you to hit targets too, especially when you’re first starting off. We all know that feeling, and so we’re quick to help rookies get on target.

Thanks,

Cal

Great article Cal! I’ve been following you for quite a while and truly appreciate your work in advancing precision shooting. I built what is essentially an M40/A5 in 30-06 with a 26″ Rock Creek 5R barrel and I favor Sierra SMKs in 175 and 190 grs. After getting my loads down to under 1/4 MOA, I was able to improve that by playing with bullet jump. I am currently running my jump at .060″ and making 5 shot bugholes at 100 yds. I don’t compete in anything, just against myself. Plus I run an Elite Iron suppressor. Guys at the range come over and say what are you shooting and are impressed that it is an ’06.

I’ve only shot 600 at the range, they do have 1000, but on my uncle’s farm I was going out to 1280 yds and getting very good groups on a 16″ steel plate. Bullet jump does matter.

That’s awesome! I appreciate you sharing that. It’s guys like you that I had in mind when I was starting this blog in 2012. I just wanted to try to make it easier for other people to learn and progress in this sport that I’ve become so passionate about.

I really like that you picked a 30-06. That cartridge is a great all-around cartridge, but doesn’t get a lot of press … because it seems like many people either go smaller (308-sized case with various caliber bullets) or they go much larger (like the 300 Norma Mag). However, the 30-06 is a goldilocks cartridge that can do a lot of things well. Honestly, I’m still a hunter at heart, which is how I originally got into all this, and cartridges like 308, 30-06, 300 Win Mag and 7mm Rem Mag are all standouts when you aren’t just ringing steel and need to consider that critical factor of terminal performance. The 30-06 is absolutely capable of going beyond 1000 yards, so it’s great you’ve been able to stretch its legs out. Not everyone has access to distances that long, so it’s cool that you do with a cartridge like that.

Thanks,

Cal

As a Novice … this shooting is “simply” done off of a Bipod, and ?

Hey, Fred. For stuff like this, most of us shoot off a bipod with a rear bag. What bipod and what rear bag varies, from something like a Harris or Atlas bipod and a small bag like SAP’s Run n’ Gun Bag or Armageddon Gear’s Game Changer to something more ELR or F-Class’ish like a Phoenix bipod and Edgewood rear bag.

95% of the time I use the former, but when I’m doing load development or testing something and want to ensure every shot I break is as perfect and centered as possible … I go with the Phoenix & Edgewood setup. Hope that helps.

Thanks,

Cal

Thanks … what about Hold … with that bunch of rifles? Tight or Loose … do you first have to “experiment,” or with these rifles does one typically “know” to use a lighter hold? Thanks … I only have a stock Ruger RPR in 6.5, which seems to like a lighter hold

I typically go with a relatively light hold on bolt actions, but not free recoiling like Benchrest shooters do. In my experience, I usually get the best precision from AR’s with a much tighter grip. But with bolt actions, I load the rifle (pushing into the butt with my shoulder) fairly light, and then my grip with my trigger hand is very, very loose and I don’t touch the rifle with my support hand. That’s at least how I shoot from a bench for precision. When I’m firing off a barricade at a competition, it’s similar … but sometimes you just have to improvise! 😉 That is where the strategy and gaming (in a good way) come in, and that makes the game even more fun for me.

Thanks,

Cal

Nice stuff, and thanks for the effort. All data is good data!!

As far as conditions go, as long as all the strings were fired in roughly the same conditions, and as long as there were enough data points involved, the “conditions” will normalize across all the groups and be less of a factor than you’d think.

Had you shot one string on one day in conditions X, and then the next string a week later in conditions Y, etc etc then “conditions” could have been a factor. Since you didn’t, and you shot them all roughly in the same conditions within a contained time period, I wouldn’t put any thought into the environment. I consider all the data valid, and it’s much appreciated.

Just an old statistician and engineer and physics guy’s opinion…

Thanks, Jim! I appreciate you sharing that.

Thanks,

Cal

Cal,

Can’t reply above, so…

I wasn’t trying to slight Mark’s methodology, or efforts. I *did* read the previous post about having the work reviewed by a statistician. Rather than dig myself in any further of a hole, I’ll just say that when I do a test, any test, particularly if the results are promising… I usually like to test again, on a different day, just to see if the results repeat, or not. Obviously Mark has done that, across multiple guns and calibers.

Thanks again, for the information.

Thanks … that IS how I shoot. I’m all Bolt Action. And Sorry for being Off Topic here …

Cal/Mark,

You bring a tear of joy to my heart … We’ve known about copper bullets behaving this way for years, but we have learned something regarding the application to traditional bullets. Stunned at how it has taken over 100 years for this stuff to come to light. Now we can seriously put some factoids to rest. I know these results are evidential for particular bullets and cartridges only, but I’m prepared to wager that future tests demonstrating different results will be anomalies. Anomalies to be sure, are sent to keep us on our toes and test us, but I seriously like your compass bearing

Thank you!

Steve Hurt

Ha! You bet, Steve. I appreciate you chiming in to let us know this corroborates the research data you guys have collected on solid bullets. I think that is very interesting!

Thanks,

Cal

I’m just a hunter but Barnes has suggested 0.050 off since I killed my first Cape Buff with one over 3 decades ago. Too much benchrest technique will cause big problems on a hot day.

Yep. Barnes has suggested jumping on their solid bullets for a while. I tried to load them like a traditional bullet when I first started reloading (i.e. close to the lands like all the reloading books say 😉 ), and it never would group for me … but the Federal Premium factory ammo would group outstanding with the same bullet. I never could understand why I couldn’t get it to shoot like that at the time, but I bet that factory ammo was jumping a mile! Funny thing to think back on now!

Thanks,

Cal

Is there the same situation on rimfire caliber, i mean .22LR ?

No idea on that one!

Anyone else have any experience with jump/freebore on 22LR?

So I have been following the recent string of posts about bullet jump, and with all this newfound info I have one question. When starting to develop a new load for a rifle, where should you start for bullet jump when doing a ladder test? Would you start loading to SAAMI spec for OAL or load long into the lands? Just wondering if there’s a “sweet” spot of OAL to start loading at for a ladder test, then fine tune the bullet jump after once an acceptable velocity node is determined.

Hey, Brian. I plan to give those specific tips in the next post, and I’ve already started working on it. I will say that a lot of it comes from tips from Scott Satterlee, although I’ve adapted a few things in light of all this data. You can listen to a podcast where Scott gives his recommendations for this here: https://moderndaysniper.podbean.com/e/mds-episode-0014-scott-satterlee-and-hand-loading/. Lots of great info there! Other than that, stay tuned. Within a week I’ll answer your question more directly.

Thanks,

Cal

I take issue with how some of this is presented. In particular, statements like:

“namely the two that are furthest apart. Where the other 16 shots hit in no way affects the extreme spread, which means we’re ignoring 88% of the shots fired!”

That’s simply not true. ES is a function dependent on number of shots. As the number of shots increases, so does the ES almost always, up until it hits a physical limit. What ES does is remove the requirement for calculating a center, as it ignores the balance of the shots (whether more or fewer are tightly or loosely dispersed).
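To illustrate how ES tends to grow with shot count, here is a minimal simulation sketch. It assumes shots scatter as a circular normal distribution with the same spread in both axes, which is a modeling assumption for illustration and not something taken from Mark’s data. The function names and the shot counts are hypothetical.

```python
import math
import random


def extreme_spread(shots):
    """Largest center-to-center distance between any two shots in a group."""
    return max(
        math.dist(a, b)
        for i, a in enumerate(shots)
        for b in shots[i + 1:]
    )


def mean_es(n_shots, sigma=1.0, trials=2000, seed=1):
    """Average ES over many simulated groups of n_shots each.

    Assumption: each shot is drawn from a circular normal distribution
    with standard deviation `sigma` per axis.
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        shots = [(rng.gauss(0, sigma), rng.gauss(0, sigma)) for _ in range(n_shots)]
        total += extreme_spread(shots)
    return total / trials


if __name__ == "__main__":
    for n in (3, 5, 10, 18):
        print(f"{n:2d} shots: mean ES = {mean_es(n):.2f} x sigma")
```

Under that assumption the average ES keeps climbing as shots are added, even though the rifle and ammo (the underlying `sigma`) never change.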

The problem with ATC, and which isn’t addressed or accounted for at all, is that it depends on there being a center. That center is influenced by where the shots hit, and that center moves relative to other groups. That movement must be accounted for in your measurement, and discarding it in favor of the scalar average distance is no different from discarding ‘fliers’ in an ES measurement. Just from pure statistical chance, the centers will move based on bias in how the shots are laid out even if under more samples and more averages, those biases would be minimized.

You can see this if you mark shot ordering and run it through a tool that calculates centers. You will see the center walk all around the group even though the ATC might not change much. Where the center is relative to other samples is the other half of the data to make ATC valid, and I don’t see it in your measurement at all.
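A small sketch of the quantities being discussed may help: the group center, the average distance to center (ATC), and how far the center sits from another group’s center. The shot coordinates below are made up for illustration, and the function names are hypothetical.

```python
import math


def center(shots):
    """Mean point of impact of a group of (x, y) shots."""
    xs, ys = zip(*shots)
    return (sum(xs) / len(xs), sum(ys) / len(ys))


def average_to_center(shots):
    """ATC: mean distance from each shot to the group's own center."""
    c = center(shots)
    return sum(math.dist(s, c) for s in shots) / len(shots)


def center_shift(group_a, group_b):
    """Distance between the centers of two groups."""
    return math.dist(center(group_a), center(group_b))


if __name__ == "__main__":
    # Made-up coordinates (inches): group_b is group_a moved 3" to the right.
    group_a = [(0, 0), (2, 0), (0, 2), (2, 2)]
    group_b = [(x + 3, y) for x, y in group_a]
    print("ATC a:", average_to_center(group_a))
    print("ATC b:", average_to_center(group_b))
    print("center shift:", center_shift(group_a, group_b))
```

The two groups report the same ATC even though their centers are 3 inches apart, which is the information ATC alone does not carry.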

In addition, while you had a mathy guy review your results and conclude there is a trend (and there is, he’s right), statements like this:

“So, if I were trying to pick between these jumps, I’d go with the middle group because the ATC is 13% smaller than the 0.015-0.025” results, even though the ES is 2% bigger.” are not backed up by your data with only 18 samples per measure. That should read something like “ES is 2% bigger +/- 10% and is probably a statistically insignificant difference.”

“18 shots is such a huge sample size and likely larger than you really need”

18 is not a ‘huge’ sample size. 20 shots and less are still under a huge influence from randomness. I host and shoot a short range competition which takes a 2×10 average per entry (20 shots total) and have shot it literally dozens of times with the same rifle and ammo, same exact conditions (indoors). My results range from 0.9 to 0.55 MOA on any particular 10 shot group, and 0.8 to 0.7 on any 2×10 average. The 20 shot aggregates range from 0.7 to 0.9 MOA – a nearly 30% difference just between groups from nothing more than random chance.

For you to make any claim about something being 2% better, you need well over 100 shots accounted in each of your comparisons.

You can even see this in your own data.

If you look at that bottom chart, and group the areas that have a similar pattern (and should have a similar behavior because the jump is so small, per Bryan Litz’s own description of bullet jump behavior), the standard deviation / average is 6% at a minimum and up to 14% for a pretty good set of samples. Any % below that 6% is totally suspect, and any % near or below that 14% is highly suspect.

This is exactly why I enable comments on the blog – I appreciate the well thought out correction by peers. I can see your points and don’t disagree. I actually went back and forth on what to say about ES, because while it is technically based on the location of 2 shots … it isn’t purely based on each shot because each shot you fire had a chance to be one of the outer shots. We know that is true because at least in general, the more shots you fire, the bigger your ES will get. That’s exactly why I didn’t throw out ES and only look at ATC. I think they both offer value. While other statistical measures might have more technical merit, those two are easy for even non-technical readers to understand what they physically represent.

And of course we’d always like larger sample sizes – except for the guy paying for the ammo and having to take the time and barrel life to shoot it! I still think if Mark would have fired 2 more rounds per group or even 20 more rounds per group it wouldn’t have changed the results in such a dramatic way that we’d make a different decision on which one we wanted to move forward with if we were doing this as load development on our own rifle. Maybe a short distance Benchrest shooter would, but not for those of us shooting PRS/NRL style long range matches or even Extreme Long Range matches … which is my focus and audience. I realize I am representing a pragmatic approach, and you might not agree with it from a purist perspective – but I think it’s a practical perspective that is helpful to most people reading this.

Ultimately, I’m trying to make this practical and approachable by non-math guys too – so there is a balance here of technical accuracy and it being understandable to guys who don’t have math/physics/engineering degrees. That is EXTREMELY hard to do, and I actually feel like I do it well – but it is only because I spend a ton of time working on that specifically. To try to help you understand what I mean, here is a direct quote from a comment several days ago on one of the articles in this series:

I hate that. I try hard to make this approachable and applicable to even the novice shooter who doesn’t have a technical background – and apparently I failed that reader. That means he didn’t get value from what I was trying so hard to communicate. So while I understand the merit of your points, and maybe I should word that differently (and I’ll try to think of a way to do that), if I included all the details and numbers to satisfy math guys like you, I’d lose guys like him.

Don’t misunderstand me. I really do appreciate your comments. They are well thought out and I appreciate you taking the time to communicate it. That really is why I enable comments on the blog, so that guys like you can correct me when I overstate something or might not explain it the right way. I believe in accountability, even for me! Ultimately, I’d rather PRB be shut down forever than to become a source of bad information. There are too many other websites out there that are doing that! 😉 I just wanted to try to explain my perspective and why I chose to write it the way I did. Hope you understand. Thanks again for taking the time to add to the discussion! I take it as a huge compliment that I’ve attracted sharp readers like you!

Thanks,

Cal

Math Guy–

I think that if you measured the mean radius from the point of aim rather than the point of impact, you’d satisfactorily address your concern with the moving center.

It’s a bit of a tangent, but it also raises the issue in my mind of how confident you are in a given zero. I think most people’s “zeroed” rifles are off by more than they will admit. Because to be really, really confident that a zero is within something like 0.2 MOA, it takes a massive sample compared to what people are willing to shoot.

Which means that there is a fundamental assumption at work that is demonstrably wrong. We tend to focus on group size (and ignore POA) because we think we can just take the group, click the scope a few times and magically it’s perfectly centered.

Only it isn’t. Because we don’t truly know the center of the group. And so the clicks we make aren’t as precise as we wish. And the “verification” of that zero is suspect because even a perfect zero might be “off” in our verification, and a bad zero might appear “on” because the distributions overlap.

Though it’s more tedious, there is a good way to know how many shots you need to shoot to have confidence in the true center of the group. But it involves calculating the center of the group after every shot and seeing how much the center moves as you add shots. The group is truly representative when you’ve fired enough shots into it and the center doesn’t move more than whatever amount you are concerned about. It converges.
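Here is one way that convergence check could be sketched. The stopping rule (the center moving less than a tolerance for a few consecutive shots) and the `window` parameter are assumptions added for illustration; the comment above only describes watching how much the center moves as shots are added.

```python
import math


def running_centers(shots):
    """Group center recalculated after each shot, in firing order."""
    centers = []
    sum_x = sum_y = 0.0
    for n, (x, y) in enumerate(shots, start=1):
        sum_x += x
        sum_y += y
        centers.append((sum_x / n, sum_y / n))
    return centers


def shots_until_stable(shots, tolerance, window=3):
    """Shot count at which the center has moved less than `tolerance`
    for `window` consecutive shots, or None if that never happens.

    `window` is a hypothetical guard against stopping on one lucky shot.
    """
    centers = running_centers(shots)
    streak = 0
    for i in range(1, len(centers)):
        move = math.dist(centers[i], centers[i - 1])
        streak = streak + 1 if move < tolerance else 0
        if streak >= window:
            return i + 1  # i is zero-based, so shot count is i + 1
    return None


if __name__ == "__main__":
    # Made-up impact coordinates in MOA, listed in the order fired.
    shots = [(0.0, 0.0), (1.0, 0.0), (0.5, 0.0), (0.5, 0.0), (0.5, 0.0), (0.5, 0.0)]
    print(running_centers(shots))
    print(shots_until_stable(shots, tolerance=0.1))
```

With real targets you would feed in measured impact coordinates in firing order and pick a tolerance that matches how precise you need the zero to be.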

Anyway, great comment thread to another superb PRB post.

Thanks, Justin. That’s an interesting way to think about zero and sample size. Makes sense to me! I appreciate you sharing your thoughts.

Thanks,

Cal

This is a neat idea, and the amount of work done is impressive, but I don’t think the results are statistically significant. Group size is a statistical process, so the sizes fluctuate randomly. The 18-shot program is essentially comparing sizes of 6-shot groups. To get an idea of the statistical fluctuations, look at ammo or gun tests in American Rifleman magazine, where they measure the average of 5×5-shot groups. On average the largest of the 5 groups is 1.6x the smallest; statistically this can be much larger or smaller. So if you measure 5 jump values shooting one 5-shot group at each jump, you expect statistical fluctuations of 60% or more. The statistical precision of a 6-shot group is not appreciably better than a 5-shot group.

I’ve done Monte Carlo studies of group size distributions which reproduce the American Rifleman data and characterize the statistical precision of different schemes. I found that for shooting a single 5-shot group at each condition only factor of two differences in size are significant. The best compromise of shots fired vs. precision is the NRA protocol, the average size of 5×5-shot groups, which has +/-20% precision (1-sigma). I also looked at rms radius vs. extreme spread. rms radius fluctuates less than ES, but the number of shots which must be fired is not much different. If you have access to an electronic target then use mean radius, otherwise ES works fine, just fire a few more shots.
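For readers who want to try this kind of study themselves, here is a minimal Monte Carlo sketch of the largest-to-smallest ratio among five 5-shot groups. It is not Gary’s actual model: it assumes a circular normal dispersion with constant spread, and the function names are hypothetical, so the numbers it produces may not match his figures or the American Rifleman data.

```python
import math
import random
import statistics


def extreme_spread(shots):
    """Largest center-to-center distance between any two shots."""
    return max(
        math.dist(a, b)
        for i, a in enumerate(shots)
        for b in shots[i + 1:]
    )


def simulated_group_es(rng, n_shots=5, sigma=1.0):
    """ES of one simulated group (assumed circular normal dispersion)."""
    shots = [(rng.gauss(0, sigma), rng.gauss(0, sigma)) for _ in range(n_shots)]
    return extreme_spread(shots)


def largest_to_smallest(rng, n_groups=5, n_shots=5):
    """Ratio of the biggest to the smallest group in one 5x5 session."""
    sizes = [simulated_group_es(rng, n_shots) for _ in range(n_groups)]
    return max(sizes) / min(sizes)


def simulate(trials=5000, seed=1):
    """Mean and median largest/smallest ratio over many simulated sessions."""
    rng = random.Random(seed)
    ratios = [largest_to_smallest(rng) for _ in range(trials)]
    return statistics.mean(ratios), statistics.median(ratios)


if __name__ == "__main__":
    mean_ratio, median_ratio = simulate()
    print(f"mean largest/smallest ratio:   {mean_ratio:.2f}")
    print(f"median largest/smallest ratio: {median_ratio:.2f}")
```

Every simulated group here comes from the same "rifle", so any ratio above 1 is pure chance, which is the fluctuation a single group per jump has to overcome.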

So I suggest a good procedure might be a variation of a suggestion Bryan Litz once made: measure 5×5 at 3 jumps, e.g. .010″, .040″, and .080″. Differences greater than 20% are an indication (but not evidence) that one jump is better than another. A 40% difference (2-sigma) is evidence.

Very interesting, Gary. Those are some good points. I knew the standard 5 groups at 5 shots each, and I do appreciate when guys use that method … but hadn’t actually heard Bryan suggest to use that as the method to find bullet jump. I actually talked to Bryan about all this and he looked through my drafts of this series of posts before I started publishing, and he didn’t mention that. That is interesting.

While I completely see your point from a mathematical/statistical/sample size standpoint … the problem is that is sure a lot of rounds! 75 rounds of barrel life to establish a jump may give you statistically significant results, but I’m not sure it is practical for most people. If you are using a barrel that might give you 1300 rounds of accurate barrel life (like what I usually average on a 6mm Creedmoor barrel), and you use 75 rounds to establish jump and then maybe another 75 rounds to tune into the best powder charge … that is 12% of your barrel life. And I’d expect you might need to do some barrel break-in (or at least fire some rounds until the muzzle velocity stabilizes) before the results of those other 75 round tests are 100% repeatable/reliable. So if you are a real stickler for high sample sizes and trying to make all this perfect, you might be closer to 20% of the barrel life gone before you settle on a load. If a chambered and threaded barrel cost $800, then that portion of the barrel life costs you $160, and adding roughly $1/round for ammo brings the total to over $300 … not to mention the time. I just wonder how many people are willing to tinker that much or waste that much barrel life. It seems like most people are looking for a more pragmatic approach that might not be as precise/repeatable, but still gets you 80% of the benefit.
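The arithmetic above can be sketched as a small calculator so you can plug in your own barrel life and costs. The function name and dictionary keys are hypothetical; the inputs in the example are the figures from the reply above (1300-round barrel, $800 barrel, roughly $1/round).

```python
def load_development_cost(rounds_used, barrel_life, barrel_cost, ammo_cost_per_round):
    """Share of barrel life and dollars consumed by load development."""
    fraction = rounds_used / barrel_life
    barrel_share = fraction * barrel_cost
    ammo = rounds_used * ammo_cost_per_round
    return {
        "fraction_of_barrel": fraction,
        "barrel_share": barrel_share,
        "ammo": ammo,
        "total": barrel_share + ammo,
    }


if __name__ == "__main__":
    # 75 rounds for jump + 75 rounds for powder charge.
    two_tests = load_development_cost(150, 1300, 800, 1.0)
    # The "closer to 20%" scenario: 20% of a 1300-round barrel is 260 rounds.
    stickler = load_development_cost(260, 1300, 800, 1.0)
    for label, cost in (("150 rounds", two_tests), ("260 rounds", stickler)):
        print(
            f"{label}: {cost['fraction_of_barrel']:.0%} of barrel, "
            f"${cost['barrel_share']:.0f} barrel + ${cost['ammo']:.0f} ammo "
            f"= ${cost['total']:.0f}"
        )
```

At 260 rounds the barrel share works out to $160, and the ammo adds to that, which is consistent with the "over $300" figure.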

So from an academic perspective, I see your points and can’t disagree … from a practical/pragmatic standpoint, I’m not sure I personally see the benefit. But, one of the reasons I have enabled comments on my blog is because my perspective certainly isn’t the only one that matters! I really mean that. Gary, you are clearly a sharp guy who has spent a lot of time thinking about this, and I appreciate the thoughtful comments. Thank you for chiming into the conversation. I appreciate your insight. You certainly challenged me to think about this more.

Thanks,

Cal